Review Article - Imaging in Medicine (2009) Volume 1, Issue 1

Radiation dose reduction in computed tomography: techniques and future perspective

Lifeng Yu†, Xin Liu, Shuai Leng, James M Kofler, Juan C Ramirez-Giraldo, Mingliang Qu, Jodie Christner, Joel G Fletcher & Cynthia H McCollough

Department of Radiology, Mayo Clinic, 200 First Street SW, Rochester, MN 55905, USA

- Corresponding Author:

- Lifeng Yu

Department of Radiology, Mayo Clinic

200 First Street SW, Rochester, MN 55905, USA

Tel: +1 507 284 6354

Fax: +1 507 284 4132

E-mail: yu.lifeng@mayo.edu

Abstract

Keywords

computed tomography ▪ CT ▪ CT technology ▪ radiation dose reduction ▪ radiation risk

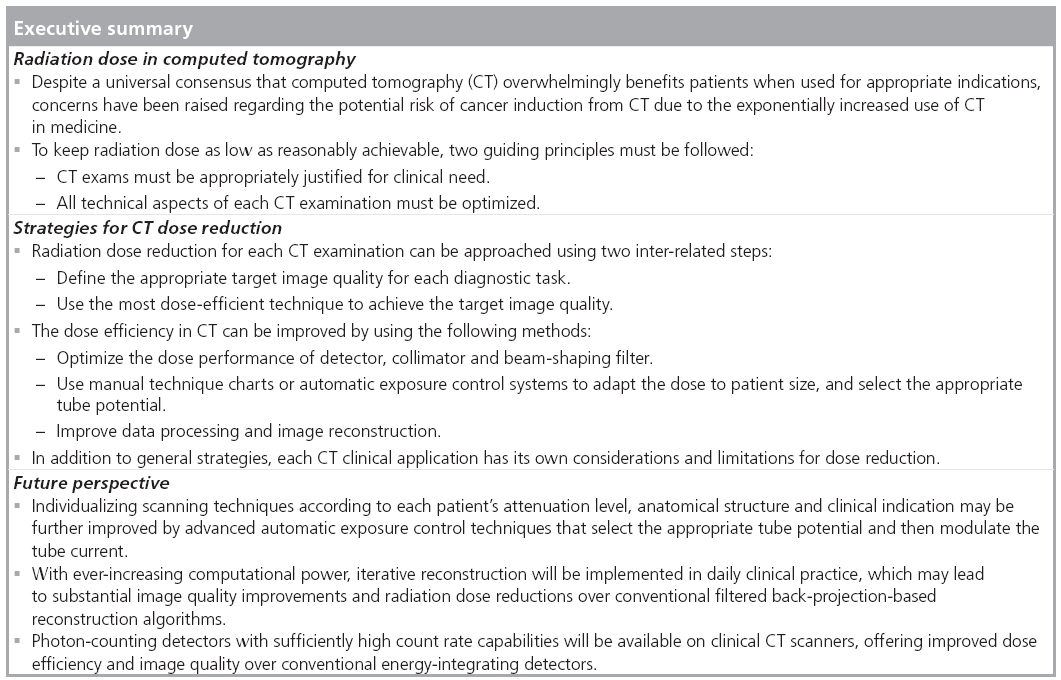

Since its introduction in 1973 [1], x-ray computed tomography (CT) has established itself as a primary diagnostic imaging modality. Following the introduction of helical scanning in the late 1980s [2,3] and of multidetector-row technology in the late 1990s [4], the number and impact of clinical CT applications have continued to grow. With its fast scanning speed and isotropic spatial resolution of 0.3–0.4 mm, CT allows physicians to diagnose injuries and disease more quickly, safely and accurately than alternative imaging techniques that are more invasive or less sensitive. CT imaging also plays a major role in the staging, treatment planning and follow-up of cancer. Because of this tremendous value, CT use is now pervasive in modern medical practice: an estimated 67 million CT examinations were performed in the USA in 2006 [301], up from 3 million in 1980 [5].

However, despite clear evidence that CT provides invaluable information for diagnosis and patient management, it carries a potential risk of radiation-induced malignancy [6]. CT alone contributes almost half of the total radiation exposure from medical use and one quarter of the average per capita radiation exposure in the USA [301]. A series of recent articles used the total radiation dose received by the entire US population from CT to estimate the potentially attributable cancer incidence or mortality in the whole population [5,7,8]. One study suggested that as much as 1.5–2% of cancers may eventually be caused by the radiation dose currently used in CT [5]. These estimates, however, remain highly controversial [9]. The cancer-risk model used in these articles relies on the National Academies of Science’s report on the biological effects of ionizing radiation [6], which notes that the statistical limitations of the available data make it difficult to evaluate cancer risk in humans at low doses (<100 mSv), a level 10 to 100 times higher than the effective dose from a typical CT examination. More importantly, these articles did not take into account the benefit to the patient of an appropriately indicated CT scan, particularly considering that the majority of the total radiation dose from CT to the US population was received by older or symptomatic populations. In these individuals, the health benefit of the timely, accurate and noninvasive diagnosis that CT facilitates far exceeds the estimated potential risk [9].

Nevertheless, since the cancer risk associated with the radiation dose in CT is not zero, reducing radiation dose in CT must remain one of the top priorities of the CT community, particularly in light of the continued increase in the number of CT examinations performed annually in the USA. Two guiding principles must be followed [10]. First, each CT examination must be appropriately justified for the individual patient [11,302]; the requesting clinicians and radiologists share the major responsibility for directing patients to the most appropriate imaging modality for the required diagnostic task. Second, all technical aspects of each CT examination must be optimized so that the required level of image quality is obtained while keeping the dose as low as possible.

This article focuses on the second guiding principle and summarizes the general technological strategies commonly used for radiation dose reduction in CT practice. Several clinical areas, including pediatric, cardiac, dual-energy, perfusion and interventional CT, are discussed specifically, and some perspectives on future CT dose-reduction techniques are presented.

Quantifying CT radiation dose & associated risk

Radiation dose in CT can be quantified in a variety of ways; scanner radiation output, organ dose and effective dose are among the more common dose metrics. Scanner radiation output is currently represented by the volume CT dose index (CTDIvol), which describes the radiation output of the scanner in a standardized way using two standardized acrylic phantoms [12]. The head and body CTDI phantoms are 16 and 32 cm in diameter, respectively, and 14 cm long. CTDIvol is expressed in milligray (mGy). CTDIvol and related quantities, such as the weighted CTDI (CTDIw) and the dose length product (DLP), are widely used for quality assurance testing and to describe and optimize the radiation output of a specific scanning technique. It is important to realize that these metrics are not a direct measurement of patient dose; they are standardized metrics that represent scanner output levels, as measured in a standardized phantom. With the increasing width of detector collimation and the introduction of cone-beam CT scanners, the accuracy of CTDI-based CT dosimetry has been called into question [13–16].
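The relationships among CTDIw, CTDIvol and DLP can be written out in a few lines. The sketch below uses the standard definitions (CTDIw as one third of the central plus two thirds of the peripheral CTDI100 measured in the phantom, CTDIvol as CTDIw divided by helical pitch, DLP as CTDIvol times scan length); the function names and phantom readings are hypothetical and for illustration only.

```python
def ctdi_w(ctdi100_center_mgy, ctdi100_periphery_mgy):
    """Weighted CTDI: one third of the central plus two thirds of the mean peripheral CTDI100."""
    return ctdi100_center_mgy / 3.0 + 2.0 * ctdi100_periphery_mgy / 3.0


def ctdi_vol(ctdi_w_mgy, pitch):
    """Volume CTDI for a helical scan: CTDIw divided by the helical pitch."""
    if pitch <= 0:
        raise ValueError("pitch must be positive")
    return ctdi_w_mgy / pitch


def dose_length_product(ctdi_vol_mgy, scan_length_cm):
    """Dose length product in mGy*cm: CTDIvol multiplied by the scan length."""
    return ctdi_vol_mgy * scan_length_cm


# Hypothetical 32 cm body-phantom readings (mGy), for illustration only.
w = ctdi_w(ctdi100_center_mgy=10.0, ctdi100_periphery_mgy=20.0)
vol = ctdi_vol(w, pitch=1.25)
dlp = dose_length_product(vol, scan_length_cm=40.0)
print(f"CTDIw = {w:.2f} mGy, CTDIvol = {vol:.2f} mGy, DLP = {dlp:.0f} mGy*cm")
```

Note that none of these numbers is a patient dose; they describe scanner output in the phantom, as the paragraph above stresses.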

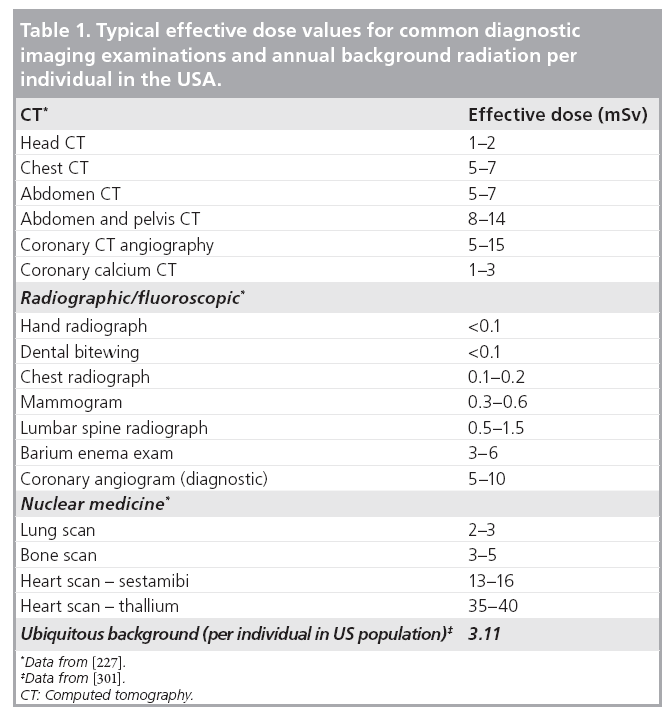

Organ dose, together with organ-, age- and sex-specific risk data, has been recommended for quantifying the radiation risk to each organ for patients undergoing CT examinations [17]. Effective dose [18,19], typically expressed in millisieverts (mSv), represents the ‘whole-body equivalent’ dose that would carry a risk of health detriment similar to that of a given partial-body irradiation [20]. Effective dose allows an approximate comparison of radiation-induced risk among different types of examinations [21]. Table 1 lists typical effective dose values for some common diagnostic imaging examinations. Effective dose is calculated as a weighted sum of the equivalent doses in radiosensitive tissues and organs, where each weighting factor reflects the relative risk of health detriment for that organ [21,22]. Effective dose is therefore not a quantity that measures radiation dose, but rather a concept that reflects the stochastic radiation risk to a reference patient.
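The weighted sum described above can be sketched as follows. We assume the tissue weighting factors of ICRP Publication 103 (earlier ICRP 60 values differ, and the text does not say which set [21,22] use); the organ doses are hypothetical and for illustration only.

```python
# Tissue weighting factors from ICRP Publication 103; "remainder" stands for the
# mean dose to the remainder tissues as a group.
TISSUE_WEIGHTS = {
    "red_bone_marrow": 0.12, "colon": 0.12, "lung": 0.12, "stomach": 0.12,
    "breast": 0.12, "remainder": 0.12, "gonads": 0.08, "bladder": 0.04,
    "oesophagus": 0.04, "liver": 0.04, "thyroid": 0.04, "bone_surface": 0.01,
    "brain": 0.01, "salivary_glands": 0.01, "skin": 0.01,
}


def effective_dose_msv(organ_doses_mgy):
    """Weighted sum of organ equivalent doses.

    For x-rays the radiation weighting factor is 1, so the equivalent dose in mSv
    equals the absorbed organ dose in mGy. Tissues not listed contribute zero.
    """
    unknown = set(organ_doses_mgy) - set(TISSUE_WEIGHTS)
    if unknown:
        raise KeyError(f"no tissue weighting factor for: {sorted(unknown)}")
    return sum(w * organ_doses_mgy.get(t, 0.0) for t, w in TISSUE_WEIGHTS.items())


# Hypothetical organ doses (mGy) for a chest scan, for illustration only.
chest_doses = {"lung": 15.0, "breast": 14.0, "oesophagus": 14.0, "thyroid": 4.0,
               "red_bone_marrow": 5.0, "liver": 8.0, "stomach": 6.0, "skin": 3.0}
print(f"E = {effective_dose_msv(chest_doses):.2f} mSv")
```

Because the weights sum to 1, a uniform whole-body dose of D mGy yields an effective dose of D mSv, which is the ‘whole-body equivalent’ idea in numerical form.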

Because organ dose assessment is not an easy task, a more straightforward and widely employed approach is to calculate effective dose by multiplying the dose length product by empirically determined conversion factors. It should be emphasized that the current concept of effective dose is based on a mathematical model of a ‘standard’ body [23], which is neither age nor gender specific [24]. Patient-specific Monte Carlo simulation software packages have recently been developed [24–27].
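A minimal sketch of the DLP-based shortcut, assuming the adult conversion coefficients commonly quoted from AAPM Report 96 (verify against the report before any real use); the DLP values are hypothetical.

```python
# Adult DLP-to-effective-dose coefficients k, in mSv per mGy*cm, as commonly quoted
# from AAPM Report 96; verify against the report before any real use.
K_ADULT = {"head": 0.0021, "neck": 0.0059, "chest": 0.014, "abdomen_pelvis": 0.015}


def effective_dose_from_dlp(dlp_mgy_cm, region):
    """Estimate effective dose (mSv) for a 'standard' adult as k * DLP."""
    return K_ADULT[region] * dlp_mgy_cm


# Hypothetical DLP values (mGy*cm) for illustration.
for region, dlp in [("head", 1000.0), ("chest", 400.0), ("abdomen_pelvis", 600.0)]:
    print(region, round(effective_dose_from_dlp(dlp, region), 2), "mSv")
```

The coefficients inherit the ‘standard’ body model, so the result says nothing specific about a child or an unusually sized adult.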

At low dose levels, defined as those below 100 mSv, there are considerable uncertainties in evaluating the risk of developing a radiation-induced cancer [6]. A report from the National Academies of Science [6] recommended a ‘linear no-threshold’ (LNT) model, in which the risk of cancer increases linearly with dose at low doses without a threshold; thus, even the smallest dose is assumed to have the potential to cause a small increase in cancer risk. Although the LNT model remains unproven, it is used as the basis for radiation protection in order to overprotect rather than underprotect. Based on the LNT model and the information available from epidemiologic studies of atomic-bomb survivors and of nuclear workers exposed to low dose rates, the average lifetime attributable risks of incidence and mortality for all cancers from an exposure of 10 mSv are estimated to be approximately 0.1 and 0.05%, respectively [6]. These estimated risks are much lower than the incidence and mortality rates of naturally occurring cancers, which are approximately 42 and 20%, respectively [6].
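Under the LNT assumption, the risk figures above scale linearly with effective dose. The sketch below uses only the per-10 mSv values and baseline rates quoted in the text; the examination dose is hypothetical, and the linear extrapolation itself is the unproven model, not a measurement.

```python
# Average lifetime attributable risks per 10 mSv quoted in the text [6].
INCIDENCE_PER_10_MSV = 0.001   # 0.1%
MORTALITY_PER_10_MSV = 0.0005  # 0.05%
BASELINE_INCIDENCE = 0.42      # naturally occurring cancer incidence, from the text
BASELINE_MORTALITY = 0.20      # naturally occurring cancer mortality, from the text


def lnt_risk(effective_dose_msv, risk_per_10_msv):
    """Linear no-threshold scaling: risk is proportional to dose with no threshold."""
    if effective_dose_msv < 0:
        raise ValueError("dose must be non-negative")
    return risk_per_10_msv * effective_dose_msv / 10.0


dose = 12.0  # hypothetical examination effective dose, mSv
inc = lnt_risk(dose, INCIDENCE_PER_10_MSV)
print(f"{dose} mSv -> {100 * inc:.2f}% attributable incidence "
      f"vs {100 * BASELINE_INCIDENCE:.0f}% baseline")
```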

Image quality & radiation dose

Radiation dose is one of the most significant factors determining CT image quality and thereby the diagnostic accuracy and the outcome of a CT examination. Radiation dose should only be reduced under the condition that the diagnostic image quality is not sacrificed. Therefore, to understand how the radiation dose in CT can be reduced, it is necessary to be familiar with the relationship between image quality and radiation dose.

There are several metrics describing different aspects of image quality in CT. Noise describes the variation of CT numbers in a physically uniform region. High-contrast spatial resolution, both in-plane and cross-plane (along the z-axis), quantifies the minimum size of a high-contrast object that can be resolved. Low-contrast spatial resolution quantifies the minimum size of a low-contrast object that can be differentiated from the background; it depends both on the contrast of the material and on the noise-resolution properties of the system. Contrast-to-noise ratio (CNR) and signal-to-noise ratio (SNR) are also common metrics of overall image quality.
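The noise and CNR definitions above translate directly into region-of-interest (ROI) statistics. A minimal sketch, assuming noise is taken as the population standard deviation of the ROI and CNR uses the background noise; the pixel values are toy data.

```python
import statistics


def noise_hu(roi):
    """Noise: standard deviation of CT numbers in a uniform region (population SD)."""
    return statistics.pstdev(roi)


def cnr(object_roi, background_roi):
    """Contrast-to-noise ratio: |mean(object) - mean(background)| / background noise."""
    contrast = abs(statistics.fmean(object_roi) - statistics.fmean(background_roi))
    return contrast / noise_hu(background_roi)


def snr(roi):
    """Signal-to-noise ratio: mean / SD. Only meaningful for a positive mean signal,
    which CT numbers (an offset HU scale) do not guarantee."""
    return statistics.fmean(roi) / noise_hu(roi)


# Toy pixel values (HU) for illustration only.
lesion = [110.0, 90.0, 110.0, 90.0]
background = [40.0, 60.0, 40.0, 60.0]
print(noise_hu(background), cnr(lesion, background))
```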

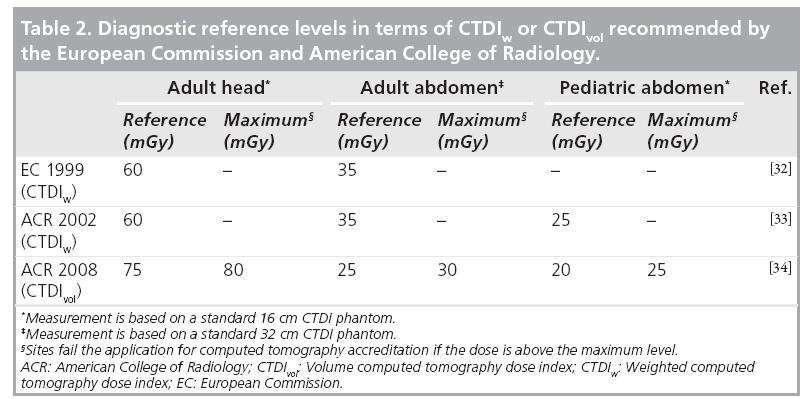

The goal of dose reduction can be approached from two perspectives. The first is to define the target image quality appropriately for each specific diagnostic task, without requiring lower noise or higher spatial resolution than necessary. For example, CT colonography mainly involves detecting soft-tissue-like polyps against a background of air and contrast-tagged stool (i.e., a high-contrast situation) [28,29], so a high noise level is acceptable and the dose can be relatively low without sacrificing diagnostic confidence. In contrast, brain and liver/pancreas CT involve the detection and characterization of low-contrast lesions, which require a relatively low noise level and thus a higher dose. As simple as it may sound, finding the appropriate target image quality demands a thorough understanding of the image quality requirements of each diagnostic task and of how the radiation dose, as determined by scanning parameters such as tube current, scan time, helical pitch and tube potential, relates to image quality. This is challenging owing to the complexity of clinical examinations, variations in observer preferences and differences in performance among scanners. Consensus requirements for image quality exist in the form of guidelines and standards, although precise quantitative requirements are detailed for only a very few examinations [30]. Coronary artery calcium scoring is one examination for which quantitative recommendations of a target noise level do exist [31]. A more commonly used method is to set diagnostic reference levels using an easily measured, standardized quantity, such as CTDIw or CTDIvol, which define the target image quality indirectly.
Diagnostic reference levels do not indicate the desired dose level for a specific diagnostic task; rather, they define a reference dose above which users should investigate the potential for dose-reduction measures. Diagnostic reference levels have been established by the European Commission (EC) and several of its member states, as well as by the American College of Radiology (ACR), for adult head, adult abdomen and pediatric abdomen examinations (Table 2) [32–34]. The ACR also introduced maximum dose levels for each of these three common examination types. Sites whose CTDIvol exceeds these values fail the application for CT accreditation, because 90% of US scanner installations are able to obtain clinically acceptable image quality below these maximum values.

The second perspective on dose reduction is to improve some aspect of image quality, such as reducing image noise; the improvement can then be traded for a lower radiation dose. This can be accomplished by optimizing the CT system and scanning techniques and by improving image reconstruction and data processing.
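How much dose a noise improvement buys can be estimated from the quantum-noise relation, noise proportional to 1/√dose. This sketch assumes quantum-limited noise and a method that lowers noise without changing resolution or noise texture; the 30% noise reduction is a hypothetical example.

```python
import math


def noise_at_dose(noise_ref, dose_ref, dose):
    """Quantum-limited noise scales as 1/sqrt(dose)."""
    return noise_ref * math.sqrt(dose_ref / dose)


def dose_fraction_after_noise_gain(noise_ratio):
    """Dose fraction that restores the original noise after a method reduces noise
    to noise_ratio times its former value at unchanged dose (noise_ratio in (0, 1])."""
    if not 0.0 < noise_ratio <= 1.0:
        raise ValueError("noise_ratio must lie in (0, 1]")
    return noise_ratio ** 2


# Hypothetical 30% noise reduction at fixed dose.
f = dose_fraction_after_noise_gain(0.7)
print(f"dose can drop to {100 * f:.0f}% of the original ({100 * (1 - f):.0f}% reduction)")
```

The square-law relation is why modest noise improvements translate into comparatively large dose savings.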

The rest of this article reviews various dose-reduction strategies, each of which relates to one or both of these perspectives. Implementing these strategies requires collaborative efforts from all stakeholders, including referring physicians, radiologists, medical physicists, technologists, regulatory organizations and manufacturers.

General dose-reduction strategies

CT system optimization

A tremendous effort has been spent on improving the dose efficiency of CT systems, which is related to many system components, including the detector, collimator and beam-shaping filter.

Detector

The x-ray detector is probably the most important determinant of the dose performance of a CT system. Two dose-relevant characteristics of a detector are its quantum detection efficiency and its geometrical efficiency, which together describe how effectively the detector converts incident x-ray energy into signal. Solid-state detectors (e.g., Gd2O2S) are now widely used in place of the xenon gas detectors that were common in the 1980s, mainly owing to their higher quantum detection efficiency. Detectors with rapid response and low afterglow are also desirable to allow fast scanning and high image quality [35].
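A simple way to see why detector efficiency matters for dose: if noise is quantum-limited, it depends on the number of detected photons, which scales with dose times overall efficiency. The sketch assumes the overall efficiency is the product of quantum and geometrical efficiency; the efficiency values are hypothetical, not measured figures for any detector type.

```python
def detector_dose_efficiency(quantum_efficiency, geometric_efficiency):
    """Overall fraction of incident x-ray quanta that contribute to the signal."""
    return quantum_efficiency * geometric_efficiency


def relative_dose_for_equal_noise(efficiency_new, efficiency_ref):
    """Detected photons scale as dose * efficiency, so equal quantum noise
    needs dose in inverse proportion to efficiency."""
    return efficiency_ref / efficiency_new


# Hypothetical efficiencies, for illustration only.
ref = detector_dose_efficiency(0.5, 0.8)
new = detector_dose_efficiency(0.9, 0.8)
print(f"relative dose for equal noise: {relative_dose_for_equal_noise(new, ref):.2f}")
```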

One of the main obstacles to reducing radiation dose is image noise. Noise in CT has two principal sources: quantum noise and electronic noise. Quantum noise is determined by the number of photons incident on and collected by the detector. Electronic noise results from fluctuations in the electronic components of the data acquisition system. When the number of photons is reduced to the point where the detected signal is comparable to the electronic noise, image quality degrades significantly. Photon starvation artifacts occur in low-dose situations or when the patient is large but the photon flux is limited. It is therefore desirable to reduce the level of electronic noise to improve image quality in low-dose examinations, which requires refinement of all electronic components in the x-ray detection system.
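The interplay of the two noise sources can be illustrated with a simple variance model: Poisson quantum variance equal to the mean photon count plus a fixed electronic variance. The sketch assumes electronic noise is expressed in photon-equivalent units and that the two sources are independent; the electronic noise level is hypothetical.

```python
import math


def detected_snr(mean_photons, electronic_sigma):
    """SNR of one reading: Poisson variance (= mean photons) plus electronic variance,
    with the electronic noise expressed in photon-equivalent units."""
    return mean_photons / math.sqrt(mean_photons + electronic_sigma ** 2)


def electronic_noise_fraction(mean_photons, electronic_sigma):
    """Share of the total variance contributed by electronic noise."""
    var_e = electronic_sigma ** 2
    return var_e / (mean_photons + var_e)


SIGMA_E = 10.0  # hypothetical electronic noise level
for n in (10000, 1000, 100, 10):
    print(n, round(detected_snr(n, SIGMA_E), 2), round(electronic_noise_fraction(n, SIGMA_E), 3))
```

At high counts the electronic term is negligible; as the photon count falls toward the electronic variance, it dominates, which is the photon-starvation regime described above.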

Collimators

Prepatient collimators are positioned between the x-ray source and the patient to define the x-ray beam coverage and avoid unnecessary radiation dose to the patient. Since the x-ray tube focal spot is not a point source, some regions of the beam coverage are only partially irradiated. This is referred to as the penumbra region, as opposed to the umbra region, which is fully irradiated by the x-ray source. In multislice CT, the penumbra region does not contribute to image formation and represents wasted dose to the patient. Geometric efficiency was introduced as a measure of radiation utilization along the detector-row direction; it is defined as the ratio of the integral of the single-scan dose profile within the active detector width to the integral of the same dose profile over its total length along the detector-row direction [12]. Geometric efficiency depends on the detector collimation and the focal spot size [36]. As the detector collimation widens, the geometric efficiency increases and dose utilization improves.
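The integral-ratio definition of geometric efficiency can be evaluated numerically. This sketch assumes an idealized dose profile (a flat umbra with linear penumbra ramps of fixed width on each side) and a detector that covers exactly the umbra; the widths are hypothetical. With a fixed penumbra, widening the collimation raises the efficiency, as stated above.

```python
def dose_profile(z_mm, umbra_mm, penumbra_mm):
    """Idealized single-scan dose profile: flat umbra, linear penumbra ramps."""
    half = umbra_mm / 2.0
    d = abs(z_mm)
    if d <= half:
        return 1.0
    if d <= half + penumbra_mm:
        return 1.0 - (d - half) / penumbra_mm
    return 0.0


def integrate(f, a, b, n=20000):
    """Trapezoidal rule on n equal intervals."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h


def geometric_efficiency(umbra_mm, penumbra_mm, detector_mm=None):
    """Profile integral over the active detector width / integral over the whole profile."""
    if detector_mm is None:
        detector_mm = umbra_mm
    f = lambda z: dose_profile(z, umbra_mm, penumbra_mm)
    extent = umbra_mm / 2.0 + penumbra_mm
    inside = integrate(f, -detector_mm / 2.0, detector_mm / 2.0)
    total = integrate(f, -extent, extent)
    return inside / total


for umbra in (4.0, 10.0, 20.0, 40.0):
    print(f"collimation {umbra:4.0f} mm -> geometric efficiency {geometric_efficiency(umbra, 2.0):.3f}")
```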

Postpatient collimators are located between the patient and the detector, often just in front of the detector, where they reject scattered radiation; this improves image quality but sacrifices dose efficiency. Careful assessment of the tradeoff between dose and image quality should be performed to optimize the design of scatter-rejection collimators [37].

Recent progress in collimator design involves reducing the amount of overscanning in helical CT. Overscanning is required in helical CT to provide sufficient data on each side of the reconstruction volume so that images can be reconstructed at the beginning and end of the scan range. The amount of overscanning depends on several parameters, such as detector collimation and helical pitch. As detector collimation becomes wider in modern multislice CT scanners, overscanning delivers an increasing amount of wasted radiation dose [38–40]. In addition to a software solution to this issue [41], Stierstorfer et al. proposed a hardware solution in which the collimator dynamically blocks the unnecessary portion of the x-ray beam [42]. Dose reductions of up to 40% were reported, particularly for high pitch and small scan ranges [43].
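Why short scan ranges suffer most from overscanning can be seen from the fraction of the irradiated length that lies outside the planned range. This sketch assumes a fixed total overrange length (in reality it depends on collimation, pitch and reconstruction) and a uniform dose along z; the 40 mm overrange is hypothetical.

```python
def overrange_fraction(planned_length_mm, overrange_mm):
    """Fraction of the irradiated z-length that lies outside the planned range."""
    if planned_length_mm <= 0 or overrange_mm < 0:
        raise ValueError("planned length must be positive and overrange non-negative")
    return overrange_mm / (planned_length_mm + overrange_mm)


# Hypothetical total overrange of 40 mm (both ends combined).
for planned in (100.0, 200.0, 400.0):
    print(planned, round(overrange_fraction(planned, 40.0), 3))
```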

X-ray beam-shaping filter

X-ray beam shaping is an important consideration for the dose performance of a CT system. The x-ray beam filter is a physical object that attenuates and ‘hardens’ the beam spectrum so that the beam is hard enough to penetrate the patient efficiently yet still provides sufficient contrast information. Since patient cross-sections are typically oval, rays passing through the periphery are attenuated less than those passing through the center. Some filters therefore have a special shape (e.g., a bowtie) that reduces the incident x-ray intensity in the peripheral region, minimizing the radiation dose to the patient, especially the skin dose. Many novel filters for different clinical applications, including head, body, pediatric and cardiac imaging, are used in modern CT scanners to reduce peripheral radiation dose [44,45].
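The bowtie idea can be illustrated with an idealized filter that makes every ray traverse the same total attenuation path. This sketch assumes a circular water phantom, parallel-beam geometry, a monoenergetic beam and a water-equivalent filter; the attenuation coefficient (about 0.02/mm for water near 70 keV) and phantom size are approximations chosen for illustration, and real bowtie filters are not designed this way exactly.

```python
import math

MU_WATER_PER_MM = 0.02  # approximate attenuation coefficient of water at ~70 keV (assumption)


def chord_length_mm(x_mm, radius_mm):
    """Path length through a circular water phantom for a ray at lateral offset x."""
    return 2.0 * math.sqrt(max(radius_mm ** 2 - x_mm ** 2, 0.0))


def ideal_bowtie_mm(x_mm, radius_mm):
    """Water-equivalent filter thickness that makes every ray traverse 2R in total."""
    return 2.0 * radius_mm - chord_length_mm(x_mm, radius_mm)


def entrance_intensity(x_mm, radius_mm, mu=MU_WATER_PER_MM):
    """Relative intensity reaching the patient surface after the filter."""
    return math.exp(-mu * ideal_bowtie_mm(x_mm, radius_mm))


def detector_intensity(x_mm, radius_mm, mu=MU_WATER_PER_MM):
    """Relative intensity behind the patient: filter path plus patient path."""
    total = ideal_bowtie_mm(x_mm, radius_mm) + chord_length_mm(x_mm, radius_mm)
    return math.exp(-mu * total)


R = 150.0  # hypothetical 30 cm diameter phantom
for x in (0.0, 50.0, 100.0, 140.0):
    print(f"x={x:5.1f} mm  entrance={entrance_intensity(x, R):.3f}  "
          f"detector={detector_intensity(x, R):.2e}")
```

The entrance intensity (and hence surface dose) drops sharply toward the periphery, while the intensity at the detector stays the same for every ray.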

Scan range

Since scan range is directly related to the total radiation dose delivered to the patient, it is important to keep the scan range as small as possible and as large as necessary in order to avoid the direct radiation exposure of any regions of the body that are not necessary for diagnosis. This is probably the simplest method to keep dose as low as possible.

Automatic exposure control

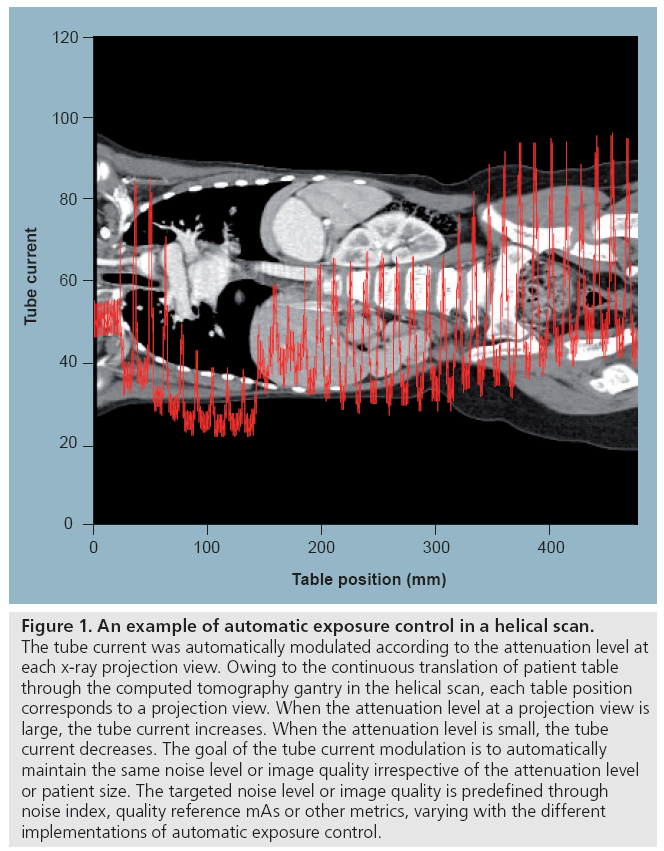

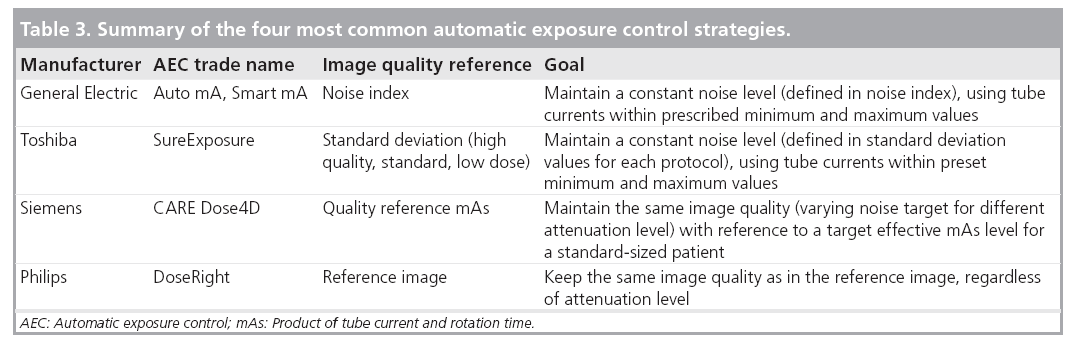

One common method to optimize radiation dose is to adjust the x-ray tube current using weight- or size-based protocols [46–51]. With this method, the patient’s size or weight is measured and the appropriate tube current is then read from a chart, referred to as a technique chart. A more advanced technique is automatic exposure control (AEC), which automatically modulates the tube current to accommodate differences in attenuation due to patient anatomy, shape and size [52–56]. The tube current may be modulated as a function of projection angle (angular modulation), of longitudinal position along the patient (z-modulation), or both. The tube current modulation may be fully preprogrammed, determined in near-real time using an online feedback mechanism, or determined by a combination of preprogramming and a feedback loop. Figure 1 shows an example of tube current modulation for a pediatric chest/abdomen/pelvis examination.
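A technique chart is essentially a banded lookup. The sketch below shows one way to implement it; the weight bands and mAs values are hypothetical placeholders, not clinical recommendations.

```python
import bisect

# Hypothetical weight-based technique chart: upper weight limit (kg) of each band
# and the tube current-time product (mAs) assigned to it. Not clinical values.
WEIGHT_LIMITS_KG = [10, 20, 40, 60, 80, 100]
CHART_MAS = [40, 60, 90, 130, 180, 240]
ABOVE_CHART_MAS = 300  # hypothetical setting for patients heavier than the last band


def chart_mas(weight_kg):
    """Look up the mAs for the first band whose upper limit is >= weight_kg."""
    i = bisect.bisect_left(WEIGHT_LIMITS_KG, weight_kg)
    return CHART_MAS[i] if i < len(CHART_MAS) else ABOVE_CHART_MAS


for w in (8, 10, 35, 75, 120):
    print(w, "kg ->", chart_mas(w), "mAs")
```

Unlike AEC, a chart sets one tube current for the whole scan, so it cannot follow attenuation changes from view to view or along z.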

Figure 1: An example of automatic exposure control in a helical scan. The tube current was automatically modulated according to the attenuation level at each x-ray projection view. Owing to the continuous translation of patient table through the computed tomography gantry in the helical scan, each table position corresponds to a projection view. When the attenuation level at a projection view is large, the tube current increases. When the attenuation level is small, the tube current decreases. The goal of the tube current modulation is to automatically maintain the same noise level or image quality irrespective of the attenuation level or patient size. The targeted noise level or image quality is predefined through noise index, quality reference mAs or other metrics, varying with the different implementations of automatic exposure control.

The intent of AEC is to use the optimal radiation level for any patient to achieve adequate image quality for a given diagnostic task. For smaller patients, less tube current, and therefore less dose, is sufficient to obtain the desired image quality. For larger patients, to ensure adequate image quality, the radiation dose must be increased.

Automatic exposure control systems are now available on scanners from each of the major manufacturers. Although the underlying principle of AEC is the same, each manufacturer implements it somewhat differently in terms of how the target image quality is defined. Table 3 summarizes the implementations of four major CT vendors. CT users must be familiar with these techniques to ensure their correct use; inappropriate use may increase patient dose or sacrifice image quality. One important question is whether the same target noise level should be kept for different patient sizes. In practice, radiologists often do not find the same noise level acceptable in smaller patients as in larger patients [57]. Lower-noise images with thinner slices are required in children relative to adults because of the smaller amount of adipose tissue between organs and tissue planes and the smaller anatomic dimensions of pediatric patients. Using a constant noise target may therefore lead to unacceptable image quality in small patients and excessive radiation dose in large patients [58].
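The effect of a constant versus a size-dependent noise target can be sketched with a toy model in which noise variance grows as exp(μd)/mAs for a water-equivalent diameter d. The effective attenuation coefficient, reference size, reference mAs and the linear size-dependent rule are all hypothetical; no vendor's AEC works exactly this way.

```python
import math

MU_EFF_PER_CM = 0.18    # hypothetical effective attenuation of water-equivalent tissue
REF_DIAMETER_CM = 32.0  # hypothetical reference patient diameter
REF_MAS = 200.0         # hypothetical mAs that gives the reference noise at that size


def required_mas(diameter_cm, noise_ratio=1.0):
    """mAs needed for a noise target of noise_ratio times the reference noise.

    Assumes quantum-limited noise with variance proportional to exp(mu*d) / mAs.
    """
    return REF_MAS * math.exp(MU_EFF_PER_CM * (diameter_cm - REF_DIAMETER_CM)) / noise_ratio ** 2


def size_dependent_noise_ratio(diameter_cm, slope_per_cm=0.02):
    """Hypothetical rule: lower noise targets for small patients, higher for large ones."""
    return max(0.5, 1.0 + slope_per_cm * (diameter_cm - REF_DIAMETER_CM))


for d in (20.0, 32.0, 40.0):
    const = required_mas(d)
    sized = required_mas(d, size_dependent_noise_ratio(d))
    print(f"{d:.0f} cm: constant-noise {const:6.1f} mAs, size-dependent {sized:6.1f} mAs")
```

Relative to a constant target, the size-dependent rule gives small patients more mAs (lower noise) and large patients less, which is the behavior the paragraph above argues for.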

Optimal tube potential

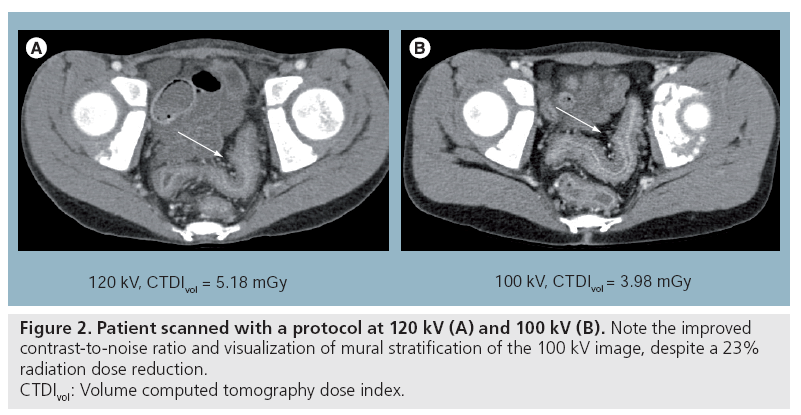

A number of recent physics and clinical studies have demonstrated various levels of dose reduction or image quality improvement by using lower tube potentials (kV) in CT imaging [59–71]. Siegel et al. suggested that the image quality improvement from lower kV increases as phantom size decreases [61]. Using both phantom and clinical studies, Funama et al. [65] and Nakayama et al. [72] showed that it is possible to reduce the tube potential from 120 to 90 kV in abdominal CT without significantly sacrificing low-contrast detectability when the patient weight is below 80 kg. Schindera et al. demonstrated improved iodine CNR and reduced radiation dose at 80 kV for the detection of hypervascular liver tumors [73]. Figure 2 compares images from an 11-year-old patient scanned with a 120 kV protocol and a 100 kV protocol. Note the improved contrast and visualization of mural stratification in the 100 kV image, despite a 23% reduction in radiation dose.

Figure 2: Patient scanned with a protocol at 120 kV (A) and 100 kV (B). Note the improved contrast-to-noise ratio and visualization of mural stratification of the 100 kV image, despite a 23% radiation dose reduction.

CTDIvol: Volume computed tomography dose index.

The underlying principle is that, within the range of tube potentials available on clinical CT scanners, iodine attenuates more strongly (i.e., provides greater CT contrast) at lower tube potentials, because the mean photon energy of the spectrum moves closer to the iodine K-edge (33.2 keV). In CT examinations using iodinated contrast media, the stronger iodine enhancement at lower tube potentials improves the conspicuity of hypervascular or hypovascular pathologies (e.g., renal and hepatic masses and inflamed bowel segments), whose visibility depends on the differential distribution of iodine [74].

Despite the increased contrast, images obtained at lower tube potentials tend to be noisier, especially for larger patients, mainly because more low-energy photons are absorbed by the patient. There is therefore a tradeoff between image noise and contrast enhancement in determining the clinical value of lower-tube-potential CT imaging. When the patient size exceeds a particular threshold, the benefit of improved contrast enhancement is negated by the increased noise. In this situation, the lower tube potential may not yield better image quality than the higher tube potential at the same radiation dose; in other words, dose reduction may not be achievable because the higher tube potential is needed to maintain appropriate image quality. Below this size threshold, however, various degrees of dose reduction or image quality improvement are achievable. Therefore, for a given patient size and clinical application, there exists an optimal tube potential that yields the best image quality or the lowest radiation dose, and this optimum depends strongly on patient size and the specific diagnostic task.
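The size-dependent optimum can be illustrated with a toy model: iodine contrast rises at lower kV, but so does the effective attenuation of the patient, so the dose needed for a fixed iodine CNR scales as exp(μd)/C². All per-kV parameters below are hypothetical, chosen only to show the crossover, and the model ignores real spectral effects, scanner output differences and noise texture.

```python
import math

# Hypothetical toy parameters per tube potential (kV): relative iodine contrast
# and effective attenuation coefficient of the patient (1/cm).
IODINE_CONTRAST = {80: 1.6, 100: 1.3, 120: 1.0}
MU_EFF_PER_CM = {80: 0.22, 100: 0.20, 120: 0.18}


def relative_dose_for_target_cnr(kv, diameter_cm):
    """Relative dose needed to reach a fixed iodine CNR.

    Toy model: noise variance ~ exp(mu*d) / dose, so CNR^2 ~ C^2 * dose / exp(mu*d),
    hence dose ~ exp(mu*d) / C^2 for a fixed CNR.
    """
    return math.exp(MU_EFF_PER_CM[kv] * diameter_cm) / IODINE_CONTRAST[kv] ** 2


def optimal_kv(diameter_cm):
    """Tube potential that minimizes the relative dose for the target CNR."""
    return min(IODINE_CONTRAST, key=lambda kv: relative_dose_for_target_cnr(kv, diameter_cm))


for d in (15.0, 23.0, 35.0):
    print(f"{d:.0f} cm -> optimal {optimal_kv(d)} kV")
```

In this toy setting the optimum moves from 80 to 100 to 120 kV as the patient grows, mirroring the size threshold described above.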

Noise control strategies in reconstruction & data processing

For a given diagnostic task and patient size, dose reduction is primarily limited by the maximum allowable noise level. Optimally designed data processing and image reconstruction methods can generate images with lower noise without sacrificing other image properties, improving overall image quality; this improvement can in turn be translated into radiation dose reduction.

Many techniques have been developed for controlling noise in CT, operating on the raw projection measurements, the log-transformed sinogram or the reconstructed images [75–83]. Image-based filtering techniques usually reduce image noise quite effectively while maintaining high-contrast resolution. Although some of these techniques have demonstrated clinical benefit, particularly in vascular applications [83], image-based filtering usually changes the appearance of the CT image and sacrifices low-contrast detectability. These techniques are widely available on most commercial scanners, but their performance requires careful evaluation before large-scale clinical use.
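A one-dimensional toy example shows the basic tradeoff of image-domain smoothing. This sketch uses a plain box filter as a stand-in (commercial image filters are typically nonlinear and more sophisticated): it lowers the noise standard deviation but also lowers the peak contrast of a small detail.

```python
import random
import statistics


def moving_average(signal, width):
    """Centered box filter (odd width) with truncated windows at the edges."""
    half = width // 2
    out = []
    for i in range(len(signal)):
        window = signal[max(0, i - half): i + half + 1]
        out.append(sum(window) / len(window))
    return out


# Noiseless profile: 0 HU background with a one-sample, 10 HU detail.
profile = [0.0] * 200
profile[100] = 10.0

# Pure white noise with a 10 HU standard deviation.
rng = random.Random(0)
white_noise = [rng.gauss(0.0, 10.0) for _ in range(2000)]

print("noise SD:", round(statistics.pstdev(white_noise), 2), "->",
      round(statistics.pstdev(moving_average(white_noise, 5)), 2))
print("detail peak:", max(profile), "->", max(moving_average(profile, 5)))
```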

Instead of reducing noise in the image domain, many more sophisticated methods have recently been developed to control noise in the projection data prior to image reconstruction [76,77,79–82,84]. These methods usually take into account the photon statistics of the CT data, operating either directly on the raw measurements or on preprocessed data. Some of them smooth the projection data by optimizing a likelihood function or by applying an adaptive nonlinear filter based on a statistical model [79–82,84]; these appear more promising for noise reduction than methods that work directly in the image domain.
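The statistical idea behind projection-domain methods can be sketched with a deliberately simple rule: the variance of a log-transformed Poisson reading is roughly 1/counts, so low-count samples are smoothed more. This is a hypothetical illustration with an invented blending rule and threshold, not any of the published filters in [79–82,84].

```python
def adaptive_smooth(log_proj, counts, count_threshold=1000.0):
    """Blend each log-domain sample with its 3-point neighborhood mean.

    The variance of a log-transformed Poisson reading is roughly 1/counts, so
    low-count (photon-starved) samples are the noisiest; the blend weight rises
    from 0 at count_threshold to 1 as counts approach zero. count_threshold is a
    hypothetical tuning parameter.
    """
    if len(log_proj) != len(counts):
        raise ValueError("log_proj and counts must have equal length")
    n = len(log_proj)
    out = []
    for i in range(n):
        lo, hi = max(0, i - 1), min(n, i + 2)
        local_mean = sum(log_proj[lo:hi]) / (hi - lo)
        w = min(1.0, max(0.0, 1.0 - counts[i] / count_threshold))
        out.append((1.0 - w) * log_proj[i] + w * local_mean)
    return out


# Toy detector row: one photon-starved sample with an outlying line integral.
log_proj = [1.0, 1.0, 5.0, 1.0, 1.0]
counts = [5000.0, 5000.0, 10.0, 5000.0, 5000.0]
smoothed = adaptive_smooth(log_proj, counts)
print(smoothed)
```

High-count samples pass through unchanged, so resolution is preserved where the data are reliable; only the noisy, photon-starved reading is pulled toward its neighbors.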

The image reconstruction method and data utilization are also important factors in noise control. A simple example is a full-scan fan-beam reconstruction, which uses 360° of projection data. In theory, a half-scan reconstruction requires only 180° plus the fan angle, so a 360° full scan measures each ray twice and roughly half of its data are redundant. Using those redundant data is nevertheless desirable because it reduces image noise. Therefore, appropriately using all of the available data in image reconstruction is essential to noise and dose reduction [85–87].
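The noise cost of discarding redundant data can be estimated roughly. This sketch assumes noise variance is inversely proportional to the angular range of data used, ignoring the non-uniform weighting a real half-scan reconstruction applies; the 50° fan angle is hypothetical.

```python
import math


def angular_range_deg(full_scan, fan_angle_deg):
    """Projection range used: 360 deg for a full scan, 180 deg + fan angle for a half scan."""
    return 360.0 if full_scan else 180.0 + fan_angle_deg


def relative_noise(range_deg, ref_range_deg=360.0):
    """Noise relative to a full scan at the same tube current, assuming noise
    variance is inversely proportional to the angular range of data used."""
    return math.sqrt(ref_range_deg / range_deg)


FAN_ANGLE_DEG = 50.0  # hypothetical fan angle
half = angular_range_deg(False, FAN_ANGLE_DEG)
print(f"half scan uses {half:.0f} deg: noise x{relative_noise(half):.2f}, "
      f"dose x{relative_noise(half) ** 2:.2f} to match a full scan")
```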

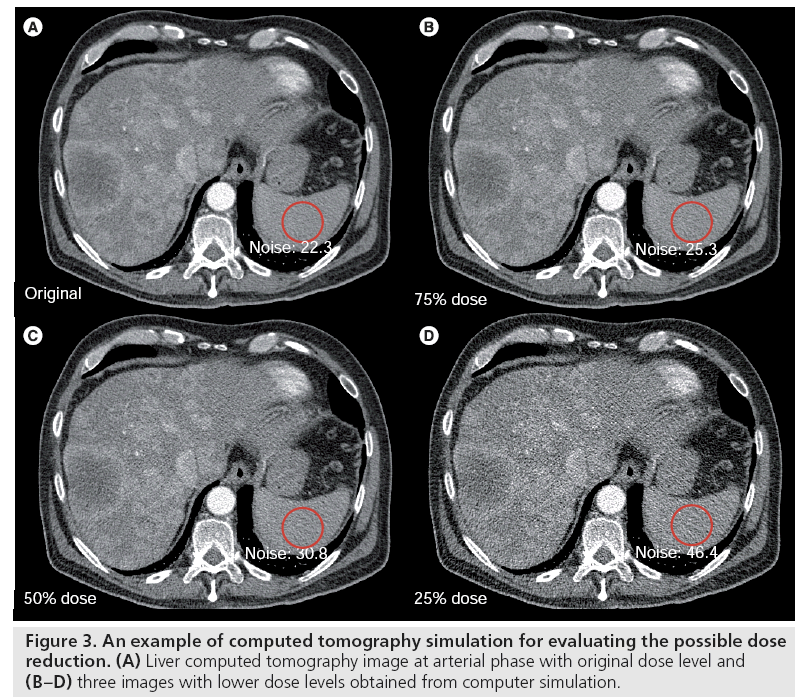

Lower dose simulation for scanning technique optimization

A common question in CT is ‘how much radiation dose can be reduced without sacrificing the diagnostic information?’ An efficient method to determine this answer is to insert realistic quantum and electronic noise in order to simulate CT examinations at different dose levels, allowing readers to review actual human data sets with real pathologies. In this manner, the diagnostic accuracy of the various dose levels (noise levels) can be determined, as opposed to selecting the noise level that looks more pleasing, but that may not be at the lowest dose required to perform the diagnostic task. Many studies have been performed using this technique to determine the lowest possible radiation dose level for various examination types [88–91]. Before the simulated low-dose images can be used for clinical evaluation, the fidelity of the low-dose simulation should be tested. Although modeling CT noise is very complicated [92], a simple Poisson model is often sufficient to generate reasonable fidelity in the synthesized low-dose images. Figure 3 displays a liver image with original dose level and three images with lower dose levels obtained from a computer simulation. Some researchers have also investigated the incorporation of spatial resolution into the simulation [93].
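The noise-insertion step can be sketched as follows, assuming Gaussian approximations to Poisson statistics, additive independent electronic noise, and the measured reading standing in for the unknown mean counts. To reach a dose fraction a, the measurement is scaled by a and extra noise is added so that the variance becomes a·N + σe²; this is a simplified illustration, not the specific methods of [88–91].

```python
import math
import random


def simulate_low_dose_counts(full_dose_counts, dose_fraction, electronic_sigma, rng):
    """Synthesize detector readings at dose_fraction of the original dose.

    Target statistics at fraction a: mean a*N and variance a*N + sigma_e**2.
    Scaling a measurement by a gives variance a**2 * (N + sigma_e**2), so the
    added zero-mean Gaussian noise has variance a*(1-a)*N + (1-a**2)*sigma_e**2.
    The measured value stands in for the unknown mean N.
    """
    a = dose_fraction
    if not 0.0 < a <= 1.0:
        raise ValueError("dose_fraction must lie in (0, 1]")
    out = []
    for m in full_dose_counts:
        n_est = max(m, 0.0)
        extra_var = a * (1.0 - a) * n_est + (1.0 - a * a) * electronic_sigma ** 2
        out.append(a * m + rng.gauss(0.0, math.sqrt(extra_var)))
    return out


# Self-check on synthetic "full-dose" readings (Gaussian approximation to Poisson).
rng = random.Random(1)
N_FULL, SIGMA_E, A = 10000.0, 5.0, 0.25
full = [rng.gauss(N_FULL, math.sqrt(N_FULL + SIGMA_E ** 2)) for _ in range(20000)]
low = simulate_low_dose_counts(full, A, SIGMA_E, rng)
mean_low = sum(low) / len(low)
var_low = sum((x - mean_low) ** 2 for x in low) / len(low)
print(f"mean {mean_low:.1f} (target {A * N_FULL:.0f}), "
      f"variance {var_low:.0f} (target {A * N_FULL + SIGMA_E ** 2:.0f})")
```

A check of this kind, comparing simulated statistics against the target, is one simple way to test the fidelity of a low-dose simulation before reader studies.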

Figure 3: An example of computed tomography simulation for evaluating the possible dose reduction. (A) Liver computed tomography image at arterial phase with original dose level and (B–D) three images with lower dose levels obtained from computer simulation.

Examination-specific dose-reduction techniques

Pediatric CT

Driven mostly by much faster scanning speeds and the ease with which accurate diagnostic information can be obtained with CT, the use of CT in pediatric patients has grown dramatically, reaching at least 4 million examinations in the USA in 2006 [5]. Minimizing the radiation dose of pediatric CT examinations is particularly important because the risk to children from radiation exposure is two to three times greater than the risk to adults [6]. This is because children’s organs are more sensitive to radiation and children have a much longer life expectancy than adults, which allows more time for a potential radiation-induced cancer to develop.

To reduce radiation dose in pediatric CT, the most important first step is to carefully assess the risks and benefits of CT for each patient. When alternative imaging modalities with less or no radiation exposure are readily available and can adequately answer the clinical question, they should be considered instead of CT. In addition, multiphase examinations should be avoided if a single-phase scan provides sufficient information [94].

When a CT examination is deemed necessary for a pediatric patient, scanning protocols specifically designed for children must be used. Following widespread recognition that adult techniques were being used inappropriately for children and small adults [95,303], a recent survey found that 98% of radiologists used weight-based tube current adjustments [96]. Adapting the dose level to patient size has become common practice in the CT community, further reinforced by the specific requirements on pediatric CT technique in ACR accreditation [34].

Patient size-dependent scanning techniques include AEC, manual technique charts and size-dependent bowtie filters. In AEC, the tube current is automatically modulated according to patient size, based on target noise levels for different patient sizes; lower-noise images with thinner slices are usually required in children. For body CT, reducing the mAs by a factor of 4–5 from adult techniques is acceptable in infants [97]. For head CT, an mAs reduction of approximately a factor of 2–2.5 from an adult to a newborn is appropriate.
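The reduction factors quoted above translate into a simple range calculation. The factors come from the text; the adult mAs values in the example are hypothetical.

```python
# Reduction factors relative to the adult technique, as quoted in the text.
REDUCTION_FACTORS = {"infant_body": (4.0, 5.0), "newborn_head": (2.0, 2.5)}


def pediatric_mas_range(adult_mas, exam):
    """Return (lowest, highest) mAs implied by dividing the adult mAs by the factor range."""
    low_factor, high_factor = REDUCTION_FACTORS[exam]
    return adult_mas / high_factor, adult_mas / low_factor


print(pediatric_mas_range(200.0, "infant_body"))   # hypothetical adult body mAs
print(pediatric_mas_range(300.0, "newborn_head"))  # hypothetical adult head mAs
```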

The use of lower tube potentials in pediatric patients to reduce radiation dose has been actively investigated recently [59–62]. Pediatric patients are less attenuating than adults, so the lower tube potential settings usually give better iodine contrast without significantly increasing the noise for the same radiation dose. Conversely, one could reduce the radiation dose and achieve the same or improved iodine CNR relative to 120 kV.

Several factors should be considered when lower-tube-potential techniques are used in practice. First, the tube current–time product (mAs) at lower tube potentials has to be increased appropriately relative to 120 kV to avoid excessive noise. Second, a fast rotation time and a high helical pitch are desirable in pediatric CT to reduce motion artifacts. Because of tube current limitations, the maximum achievable dose level (determined by the maximum mAs/pitch) may then be limited, especially at lower tube potentials. A higher tube potential may therefore still be necessary for bigger children, which calls for a weight- or size-based kV/mAs technique chart. Third, lower tube potentials tend to generate more artifacts than higher tube potentials in the presence of highly attenuating objects, such as bright iodine contrast and bone, owing to the more pronounced beam-hardening effect. In addition, lower tube potentials may increase noise and decrease the contrast of soft tissues and other structures without iodine uptake. Thus, a lower tube potential may not be appropriate for every examination and must be carefully evaluated before use.
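The tube-current limit described above can be checked with the effective mAs, mA × rotation time / pitch. In this sketch the maximum tube currents and the required effective mAs at each kV (higher at lower kV, as the text notes) are hypothetical values chosen for illustration.

```python
# Hypothetical maximum tube current (mA) available at each tube potential (kV).
MAX_MA = {80: 500, 100: 650, 120: 800}
# Hypothetical effective mAs needed for acceptable noise at each kV (higher at lower kV).
REQUIRED_EFF_MAS = {80: 150.0, 100: 120.0, 120: 100.0}


def effective_mas(ma, rotation_time_s, pitch):
    """Tube current-time product per rotation divided by helical pitch."""
    return ma * rotation_time_s / pitch


def kv_is_feasible(kv, required_effective_mas, rotation_time_s, pitch):
    """True if the tube can deliver the required effective mAs at this kV."""
    return effective_mas(MAX_MA[kv], rotation_time_s, pitch) >= required_effective_mas


# Hypothetical fast pediatric protocol: 0.33 s rotation, pitch 1.5.
for kv in sorted(MAX_MA):
    ok = kv_is_feasible(kv, REQUIRED_EFF_MAS[kv], 0.33, 1.5)
    print(kv, "kV:", round(effective_mas(MAX_MA[kv], 0.33, 1.5), 1), "mAs max,",
          "feasible" if ok else "not feasible")
```

With a fast rotation and high pitch, the 80 kV setting cannot reach its required output in this example, which is the situation in which a higher tube potential becomes necessary.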

Cardiac CT

With greatly improved temporal and spatial resolution, cardiac CT now encompasses a wide range of new clinical applications [98–100], including evaluation of coronary artery disease [101], calcium scoring [31], bypass grafts [102], ventricular function [103], cardiac valves [104], viability/infarction [105] and myocardial perfusion [106].

Visualization of the coronary arteries is probably the most challenging CT task, as it requires excellent temporal resolution to reduce motion artifacts caused by the beating heart, and high spatial resolution to differentiate small coronary structures. These requirements demand considerably more radiation dose than noncardiac CT imaging. First, half-scan data are usually used to reconstruct images in order to obtain the highest achievable temporal resolution [107,108], which requires a higher dose than a full-scan reconstruction for the same image noise. Second, high spatial resolution also demands a higher radiation dose to maintain acceptable image noise. Third, in the retrospectively ECG-gated helical scan mode, the helical pitch is often very low in order to avoid anatomical discontinuities between contiguous heart cycles [109]. Together, these factors make cardiac CT more dose intensive than other examinations [110].
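The pitch penalty in the third point follows from the definition CTDIvol = CTDIw / pitch: at a fixed mAs per rotation, lowering the pitch from 1.0 to the 0.2–0.3 typical of retrospectively gated scans multiplies CTDIvol by roughly 3–5. The CTDIw value below is a hypothetical placeholder.

```python
def ctdi_vol(ctdi_w_mgy: float, pitch: float) -> float:
    """Volume CTDI (mGy) from weighted CTDI for a helical scan."""
    if pitch <= 0:
        raise ValueError("pitch must be positive")
    return ctdi_w_mgy / pitch


ctdi_w = 10.0  # hypothetical CTDIw (mGy) at a fixed mAs per rotation
for pitch in (1.0, 0.3, 0.2):
    print(f"pitch {pitch:.1f}: CTDIvol = {ctdi_vol(ctdi_w, pitch):.1f} mGy")
```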

Currently, retrospectively ECG-gated helical scanning is the most commonly used mode in cardiac CT, mainly owing to its clinical stability and flexibility. The x-ray beam is continuously on and the patient is translated through the gantry at a very slow speed (helical pitch ~0.2–0.3). Images are reconstructed by retrospectively selecting the sinogram data from the phase of the cardiac cycle with the least motion. In a recent international analysis of 50 institutions performing coronary CT angiography, some sites had an average effective dose of 30 mSv; however, the median dose across all 1965 scans was 12 mSv, which is similar to that of a scan of the abdomen and pelvis [111]. An important dose-reduction technique in helical cardiac scanning is ECG tube-current pulsing [112], which modulates the tube current down to 4–20% of the full tube current during phases of minimal interest. Weustink et al. assessed the optimal width and timing of the ECG pulsing window at different heart rates and achieved up to 64% dose reduction without sacrificing image quality [113].
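A rough estimate of the savings from ECG tube-current pulsing treats each R–R interval as a full-current window plus a reduced-current remainder. A minimal sketch, assuming instantaneous current switching (real systems ramp the current up and down, which lowers the saving) and a hypothetical full-current window of 30% of the cycle; the 4% and 20% reduced-current levels come from the text.

```python
def ecg_pulsing_relative_dose(window_fraction: float, reduced_level: float) -> float:
    """Dose relative to scanning at constant full current.

    window_fraction: fraction of each R-R interval at full current (0..1).
    reduced_level: current outside the window as a fraction of full current.
    """
    if not (0.0 <= window_fraction <= 1.0 and 0.0 <= reduced_level <= 1.0):
        raise ValueError("fractions must lie in [0, 1]")
    return window_fraction + (1.0 - window_fraction) * reduced_level


# Hypothetical window: 30% of the cycle (e.g. a diastolic phase range).
for level in (0.04, 0.20):
    rel = ecg_pulsing_relative_dose(0.30, level)
    print(f"reduced current {level:.0%}: dose saving {1 - rel:.0%}")
```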

The dual-source (DS) CT scanner introduced in 2006 has a much improved temporal resolution of 83 ms because data are acquired simultaneously from two x-ray sources, allowing cardiac scans at higher heart rates without the use of a β-blocker to slow and stabilize the heart rate [114]. McCollough et al. evaluated the dose performance of a DS CT scanner in comparison with a 64-slice scanner and concluded that up to 50% dose reduction can be achieved on the DS CT scanner, depending on the heart rate [45]. This reduction is primarily due to more aggressive ECG-pulsing windows and increased pitch for patients with higher heart rates. An additional cardiac bowtie filter and a 3D adaptive noise reduction filter [83] also contributed to the overall dose reduction.
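The 83 ms figure is consistent with half-scan geometry: a single-source scanner needs about half a rotation of (parallel-rebinned) data, so its temporal resolution is roughly half the rotation time, while two sources roughly 90° apart gather the same angular range in about a quarter rotation. This sketch assumes a 0.33 s gantry rotation (not stated in the text) and uses the common approximation that ignores the fan-angle contribution.

```python
def temporal_resolution_ms(rotation_time_s: float, n_sources: int = 1) -> float:
    """Approximate half-scan temporal resolution (ms) at the isocenter.

    A half-scan needs ~180 degrees of parallel-rebinned data. With n sources
    evenly spaced over 180 degrees, each covers 180/n degrees, so the time
    window shrinks to rotation_time / (2 * n).
    """
    if n_sources < 1:
        raise ValueError("need at least one source")
    return 1000.0 * rotation_time_s / (2 * n_sources)


rotation = 0.33  # assumed gantry rotation time in seconds
print(f"single source: {temporal_resolution_ms(rotation, 1):.1f} ms")
print(f"dual source:   {temporal_resolution_ms(rotation, 2):.1f} ms")
```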

A prospectively ECG-triggered sequential (or step-and-shoot) scan is a more dose-efficient scanning mode for cardiac CT [115], particularly for single-phase studies. In this mode, the x-ray beam is turned on only at preselected phases of the cardiac cycle, and the dose is therefore much lower than in the retrospectively ECG-gated scan mode. Stolzmann et al. evaluated the dose and image quality of a prospectively ECG-triggered step-and-shoot protocol on a DS scanner and found mean effective doses of 1.2 and 2.6 mSv for patient groups with a BMI of less than 25 and 25–30 kg/m², respectively [116]. Similar results were obtained by Scheffel et al. [117]. Despite its low dose, this scanning mode requires a relatively stable heart rate, or some method by which the scanner can react to changes in heart rate and, if necessary, rescan the anatomic level where an ectopic beat occurred.

Additional developments in CT technology that promise dose savings or image quality improvements include a 320-slice scanner with a 16-cm wide detector, which allows the entire heart to be covered in a single rotation and a single cardiac cycle [118,119]. The DS fast-pitch scanning mode, which can be referred to as a prospectively ECG-triggered helical scan mode, also allows full coverage of the heart in one cardiac cycle [120]. Both techniques are more dose efficient than the retrospectively gated helical scan mode for single-phase acquisitions of the heart, and both eliminate the displacement artifacts caused by beat-to-beat inconsistencies that are common in scans spanning several heart cycles. However, both techniques also have limitations. β-blockade is still required to stabilize heart rates at or below approximately 60 beats/min. For the 320-slice scanner, this is due to its relatively slow gantry rotation, which limits its temporal resolution. For the DS fast-pitch scanning mode, this is because approximately 250 ms is required to cover the entire heart (~12 cm) at a pitch of 3.4, leaving only a small window in diastole in which to gather all the needed data. The fast-pitch scanning mode does not allow multiphase reconstruction, as only one phase of the cycle is scanned. This, however, markedly reduces the dose, with effective dose estimates averaging approximately 1 mSv, which is as low as or lower than the effective dose from a head CT or a coronary artery calcium scan.
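The ~250 ms figure can be reproduced from the helical table speed, pitch × collimation width / rotation time. The collimation (128 × 0.6 mm = 38.4 mm) and 0.28 s rotation time used here are assumptions about the scanner, not values given in this article; only the 12 cm coverage and pitch of 3.4 come from the text.

```python
def coverage_time_s(scan_length_mm: float, pitch: float,
                    collimation_mm: float, rotation_time_s: float) -> float:
    """Time for a helical scan to traverse scan_length_mm.

    Table feed per rotation = pitch * collimation, so the table speed is
    pitch * collimation / rotation_time (mm/s).
    """
    table_speed_mm_s = pitch * collimation_mm / rotation_time_s
    return scan_length_mm / table_speed_mm_s


# 12 cm heart, pitch 3.4; collimation and rotation time are assumed values.
t = coverage_time_s(120.0, pitch=3.4, collimation_mm=38.4, rotation_time_s=0.28)
print(f"coverage time: {t * 1000:.0f} ms")
```

Because the whole acquisition must fit inside one diastolic window of this length, heart rate control remains necessary, as noted above.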

Lower tube potentials have also been used in cardiac CT to reduce radiation dose [121,122]. The principle is the same as in pediatric CT and other clinical areas. For normal-sized patients, lower tube potentials yield better iodine contrast enhancement. Although the noise level also increases, the overall iodine CNR still improves, and therefore diagnostic image quality can potentially be maintained. A reduction in radiation dose from 8.9 mSv at 120 kV to 6.7 mSv at 100 kV has been reported [121]. However, for larger patients, the substantially increased noise and artifacts call for careful evaluation before lower tube potentials are used.

Dual-energy CT

Dual-energy CT [123] has recently regained considerable attention [74,124] with the advent of new CT technologies, including DS [114,125], kV switching [126] and dual-layer detectors [127]. Several applications, such as bone removal, iodine quantification and kidney stone characterization, have been implemented clinically [124,128–131].

Dual-energy CT involves the acquisition of data at two tube potentials and the use of various dual-energy processing techniques to provide material-specific information [123,132,133]. A common question is whether dual-energy CT requires a higher dose than single-energy CT, given that a dual-energy scan provides material-specific information in addition to images that mimic a conventional single-energy scan. Either monochromatic images or mixed images blended from the low- and high-tube potential data can be generated from a dual-energy scan. One phantom study performed on a DS scanner demonstrated that, as long as the patient is not too large, images blended from the low- and high-tube potential data yield similar or even better iodine CNR than a typical 120 kV image acquired at the same radiation dose [134]. Thus, dual-energy CT can be performed routinely in small- to average-sized patients at the same dose level as single-energy CT, while providing more diagnostic information than conventional single-energy CT. Furthermore, projection-based monochromatic imaging from dual-energy CT promises images free of beam-hardening artifacts [123].
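Why a blended image can match a 120 kV image is easiest to see by computing the CNR of a linear blend w·low + (1 − w)·high. The sketch assumes the noise in the two images is independent; weights proportional to contrast/variance then maximize CNR, and the optimum equals √(CNR_low² + CNR_high²). The contrast and noise values are hypothetical, and a scanner's default blend weight may differ from this CNR-optimal one.

```python
import math


def blended_cnr(w, c_low, s_low, c_high, s_high):
    """CNR of w*low + (1-w)*high, assuming independent noise in the two images."""
    contrast = w * c_low + (1.0 - w) * c_high
    noise = math.sqrt((w * s_low) ** 2 + ((1.0 - w) * s_high) ** 2)
    return contrast / noise


def optimal_weight(c_low, s_low, c_high, s_high):
    """Weight on the low-kV image that maximizes the blended CNR.

    Weights proportional to contrast / variance maximize the CNR of a linear
    combination; here they are normalized to sum to one.
    """
    a = c_low / s_low**2
    b = c_high / s_high**2
    return a / (a + b)


# Hypothetical iodine contrast (HU) and noise (HU) in low- and high-kV images.
c_l, s_l, c_h, s_h = 300.0, 30.0, 150.0, 12.0
w = optimal_weight(c_l, s_l, c_h, s_h)
print(f"low kV alone: {c_l / s_l:.1f}, high kV alone: {c_h / s_h:.1f}")
print(f"w = 0.5: {blended_cnr(0.5, c_l, s_l, c_h, s_h):.1f}")
print(f"optimal w = {w:.2f}: {blended_cnr(w, c_l, s_l, c_h, s_h):.1f}")
```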

Another application of dual-energy CT for radiation dose reduction is virtual noncontrast imaging [124]. Many CT examinations involve repeated scans both before and after contrast injection. Dual-energy CT allows the creation of ‘virtual’ precontrast images from a postcontrast dual-energy scan, which could eliminate the precontrast scan and thereby reduce the total radiation dose. However, the achievable dose reduction depends strongly on the image quality of the virtual noncontrast images created from the postcontrast dual-energy scan. Owing to noise amplification during dual-energy processing [132], the virtual images often suffer from high noise levels. Greater separation between the low- and high-energy x-ray spectra may improve the image quality of the virtual images and allow more substantial radiation dose reduction [135].
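The noise amplification, and why greater spectral separation helps, can be seen in a simple image-domain two-material model. The sketch assumes HU_low = B + e_low·c and HU_high = B + e_high·c, where B is the unenhanced CT number (taken as equal at both tube potentials) and c the iodine concentration. The iodine enhancement values e_low and e_high are hypothetical, and this is not presented as the processing of any particular scanner.

```python
import math


def virtual_noncontrast(hu_low, hu_high, e_low, e_high):
    """Unenhanced CT number B solved from the two-equation model."""
    return (e_low * hu_high - e_high * hu_low) / (e_low - e_high)


def vnc_noise(e_low, e_high, sigma_low, sigma_high):
    """Noise (HU) in the virtual noncontrast image.

    B is a linear combination of HU_low and HU_high, so independent noise
    propagates as sqrt((e_low*s_high)^2 + (e_high*s_low)^2) / |e_low - e_high|.
    """
    denom = e_low - e_high
    if denom == 0:
        raise ValueError("spectra must differ in iodine enhancement")
    return math.sqrt((e_low * sigma_high) ** 2 + (e_high * sigma_low) ** 2) / abs(denom)


# Hypothetical enhancement per mg/mL iodine; 10 HU noise in both images.
print(f"modest separation: {vnc_noise(40.0, 20.0, 10.0, 10.0):.1f} HU")
print(f"wider separation:  {vnc_noise(40.0, 12.0, 10.0, 10.0):.1f} HU")
# Recover B = 40 HU from a voxel with 2 mg/mL iodine.
print(virtual_noncontrast(40.0 + 40.0 * 2, 40.0 + 20.0 * 2, 40.0, 20.0))
```

In both cases the virtual image is noisier than either input image, and the penalty shrinks as the enhancement difference between the two spectra grows.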

CT perfusion

Computed tomography perfusion imaging may have a clinical role in neurovascular, cardiovascular and oncologic applications. Current techniques require the acquisition of at least 40 s of data for brain perfusion, 30 s of data for myocardial perfusion or 3 min of data for renal perfusion. The time–attenuation curve over the tissue or organ of interest is used with various single- and multiple-compartment flow models to estimate parameters such as absolute blood flow, blood volume and vascular permeability [136–139]. Owing to the long scanning time, the radiation dose in perfusion studies is usually much higher than that of a routine CT scan. Skin injury (a deterministic effect) is possible if the examination is improperly performed [140]. For reference, the threshold for deterministic effects can be as low as 1000 mGy in a single dose [141], and skin reddening may begin to occur after a single dose of 2000 mGy [142]. To avoid such injury, the technique factors (e.g., mAs, kV) are often set relatively low, so that the cumulative skin dose of the examination stays below the threshold for skin injury. This, however, results in increased noise and artifact levels that may compromise the quantitative accuracy of the technique. Hence, methods to decrease image noise at the same or lower dose levels are highly desirable.
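As one concrete example of a single-compartment model, the maximum-slope method estimates blood flow as the steepest upslope of the tissue time–attenuation curve divided by the peak arterial enhancement. This is a sketch, assuming baseline-subtracted curves and no venous outflow before the steepest tissue upslope. The synthetic curves and the 0.02 s⁻¹ flow are hypothetical, and this is not necessarily the model used in the cited studies.

```python
import numpy as np


def max_slope_blood_flow(t_s, tissue_hu, arterial_hu):
    """Blood flow per unit tissue volume (1/s) by the maximum-slope method.

    Assumes baseline-subtracted curves and no venous outflow before the
    tissue curve reaches its steepest upslope.
    """
    t = np.asarray(t_s, dtype=float)
    tissue = np.asarray(tissue_hu, dtype=float)
    arterial = np.asarray(arterial_hu, dtype=float)
    slopes = np.diff(tissue) / np.diff(t)
    return slopes.max() / arterial.max()


# Synthetic gamma-variate arterial bolus peaking at 300 HU at t = 8 s.
dt = 1.0
t = np.arange(0.0, 40.0, dt)
arterial = 300.0 * (t / 8.0) ** 3 * np.exp(3.0 * (1.0 - t / 8.0))
true_flow = 0.02  # hypothetical flow, 1/s
# Tissue accumulates iodine at rate flow * arterial concentration (no outflow).
tissue = true_flow * np.concatenate(([0.0], np.cumsum(arterial[:-1]) * dt))

bf = max_slope_blood_flow(t, tissue, arterial)
print(f"{bf:.3f} /s = {bf * 6000:.0f} mL/min/100 mL")
```

Because the estimate uses a derivative of a noisy curve, it is one of the quantities most sensitive to the low-dose noise discussed above.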

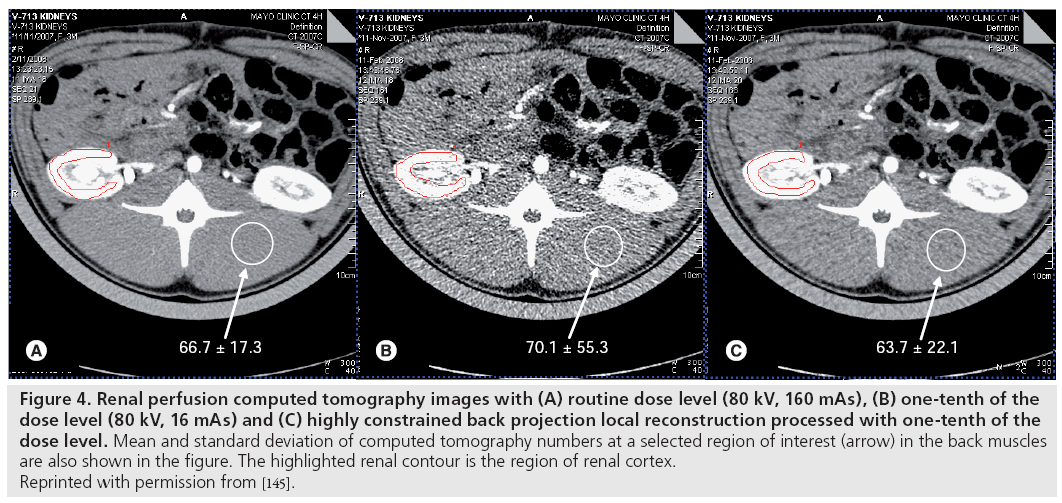

Recently, filtering techniques that exploit the spatial–temporal relationship of perfusion scans have been proposed. Representative algorithms are highly constrained back projection local reconstruction (HYPR-LR) and multiband filtering (MBF) [143–147]. Compared with other noise-reduction methods, HYPR-LR and MBF can greatly reduce image noise while preserving edges (i.e., high spatial resolution) and requiring minimal computational effort [146]. However, in the presence of significant motion, the accuracy of these algorithms may degrade.
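A minimal sketch of the HYPR-LR idea follows: each time frame is replaced by composite × (F ⊗ frame) / (F ⊗ composite), where the composite is the time-average of all frames (low noise, high spatial detail) and F is a low-pass kernel. The sketch assumes a simple boxcar kernel, positive-valued images (e.g., CT number + 1000) and a synthetic noisy series; the kernel size and all data values are hypothetical, and this is not the authors' implementation.

```python
import numpy as np


def box_filter(img: np.ndarray, size: int) -> np.ndarray:
    """Mean over a size x size window (size odd), with edge replication."""
    pad = size // 2
    padded = np.pad(img, pad, mode="edge")
    out = np.zeros(img.shape, dtype=float)
    rows, cols = img.shape
    for dy in range(size):
        for dx in range(size):
            out += padded[dy:dy + rows, dx:dx + cols]
    return out / size**2


def hypr_lr(frames: np.ndarray, size: int = 7, eps: float = 1e-6) -> np.ndarray:
    """HYPR-LR-style filtering of a (time, rows, cols) series of positive images.

    The composite supplies low noise and spatial detail; the ratio of low-pass
    filtered frame to low-pass filtered composite restores each frame's local
    temporal change.
    """
    composite = frames.mean(axis=0)
    filtered_composite = box_filter(composite, size) + eps
    out = np.empty(frames.shape, dtype=float)
    for k, frame in enumerate(frames):
        out[k] = composite * box_filter(frame, size) / filtered_composite
    return out


# Synthetic series: 1000 background (0 HU + 1000 offset), an enhancing square
# and heavy noise standing in for a low-dose acquisition (values hypothetical).
rng = np.random.default_rng(0)
n_t, n = 30, 64
truth = np.full((n_t, n, n), 1000.0)
truth[:, 24:40, 24:40] += np.linspace(0.0, 200.0, n_t)[:, None, None]
noisy = truth + rng.normal(0.0, 50.0, truth.shape)
denoised = hypr_lr(noisy)
bg = (slice(None), slice(2, 18), slice(2, 18))
print(f"background noise: {noisy[bg].std():.1f} -> {denoised[bg].std():.1f}")
```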

In an animal model, a tenfold dose reduction was found to be possible for abdominal CT perfusion imaging, especially in highly perfused organs such as the kidneys, where the iodine attenuation signal is strong [145,146]. The dose of the one-tenth-dose acquisition is approximately 39.2 mGy (CTDIvol) for a continuous 3-min stationary scan. The one-tenth-dose images, which were severely degraded by quantum noise, were improved by the HYPR-LR or MBF noise-reduction algorithms to nearly the quality of routine-dose images, without substantial loss of quantitative accuracy (Figure 4) [145,146].
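The scale of the problem the filters solve can be worked out from the Figure 4 techniques (80 kV, 160 versus 16 mAs). Assuming quantum-noise-limited images (noise ∝ 1/√mAs) and CTDIvol proportional to mAs at fixed kV and collimation, the tenfold reduction raises noise by √10 ≈ 3.2, and scaling the quoted 39.2 mGy back up gives roughly 392 mGy CTDIvol for the routine-dose 3-min acquisition.

```python
import math


def noise_increase_factor(mas_routine: float, mas_reduced: float) -> float:
    """Noise ratio (reduced / routine) when only mAs changes; noise ~ 1/sqrt(mAs)."""
    return math.sqrt(mas_routine / mas_reduced)


def scale_dose(ctdi_vol_mgy: float, mas_from: float, mas_to: float) -> float:
    """CTDIvol scales linearly with mAs at fixed kV and beam collimation."""
    return ctdi_vol_mgy * mas_to / mas_from


print(f"noise increase: x{noise_increase_factor(160, 16):.2f}")
print(f"routine-dose CTDIvol: ~{scale_dose(39.2, 16, 160):.0f} mGy")
```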

Figure 4: Renal perfusion computed tomography images with (A) routine dose level (80 kV, 160 mAs), (B) one-tenth of the dose level (80 kV, 16 mAs) and (C) highly constrained back projection local reconstruction processed with one-tenth of the dose level. Mean and standard deviation of computed tomography numbers at a selected region of interest (arrow) in the back muscles are also shown in the figure. The highlighted renal contour is the region of renal cortex.

Reprinted with permission from [145].

Interventional CT

Computed tomography fluoroscopy, first developed and reported by Katada et al. [148,149] and Kato et al. [150], provides an effective image guidance tool for percutaneous interventional procedures [151–156]. CT fluoroscopy has been demonstrated to be more effective than conventional CT-guided procedures [153,157]. However, a major concern with CT fluoroscopy is the high radiation dose when long scan times or repeated scans are performed over the same anatomic region. This raises concern not only about the dose to patients, but also about the dose to radiation workers, as physicians usually stay inside the scan room during the interventional procedure. Extensive studies have been performed to measure and monitor the radiation dose delivered to patients and operators [150,156,158–167].

Scanning techniques used in CT fluoroscopy have a direct effect on radiation dose to both patients and operators. Dose reduction could be achieved by lowering tube potential, reducing tube current and exposure time, increasing slice thickness and limiting the scan range to only necessary anatomy. The tube current used in CT fluoroscopy is usually lower than that used in routine diagnosis, and studies have suggested that lower tube current should be used after the initial localization scan [151,160,161,168]. Although image quality was compromised by reducing tube current, it was still sufficient for visualizing needles and catheters during the interventional procedure.

In the original CT fluoroscopy design, the x-ray beam was continuously on during the needle operation [148–150]. An intermittent mode (or quick check mode) was later proposed [164] in which the x-ray beam was turned off during the needle insertion. A very short CT scan was then performed to check the needle position. This method delivers lower radiation dose compared with continuous mode [157,160,161,164].

To reduce radiation dose to radiation workers, personnel inside the scan room must wear proper shielding garments (lead apron, thyroid collar and leaded eyeglasses) [162,169], and staff should stay as far from the scanner as practical, because dose decreases with the inverse square of the distance from the source. To reduce dose to physicians' hands, a variety of needle holders have been developed so that the physician can manipulate the needle from outside the primary beam and avoid direct x-ray exposure [150,167].
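The inverse-square relation can be applied directly when planning where staff stand. The sketch treats the irradiated region as a point source, which is only an approximation for scatter from a patient; the reference dose rate and distances are hypothetical.

```python
def dose_at_distance(dose_ref: float, d_ref_m: float, d_m: float) -> float:
    """Inverse-square scaling from a point source: dose ~ 1 / distance**2."""
    if d_ref_m <= 0 or d_m <= 0:
        raise ValueError("distances must be positive")
    return dose_ref * (d_ref_m / d_m) ** 2


ref_rate = 1.0  # hypothetical scatter dose rate (arbitrary units) at 0.5 m
for d in (0.5, 1.0, 2.0):
    print(f"{d:.1f} m: {dose_at_distance(ref_rate, 0.5, d):.4f}")
```

Stepping back from 0.5 m to 1 m cuts the dose to one quarter, and to 2 m to one sixteenth.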

Other radiation dose-reduction methods used in CT fluoroscopy include using angular beam modulation [170] or using a lead drape [159] or lead-free tungsten antimony shielding adjacent to the scanning plane [171] to reduce scatter radiation. Finally, operator experience has an effect on the dose to patients and personnel. As an operator becomes more experienced, both exposure time and radiation dose are expected to be reduced [153].

Future perspective

Individualizing scanning techniques

The attenuation level, anatomical structure and clinical indication are different for each individual patient. In order to use the minimum radiation dose to achieve a reasonable diagnostic image quality, the scanning techniques must take into account all of these patient-specific factors. AEC represents the most significant technical advancement towards the goal of optimizing scanning techniques for each individual patient [46–53]. Another major effort towards this goal is the design of different beam-shaping filters for different patient groups and clinical applications [44,45].

Along either of these two directions, there are still many potential improvements that promise further reduction of radiation dose. As described earlier, lower tube potential has been observed to result in various levels of dose reduction, especially for small patients. Further work is required to determine the optimal tube potential, which could become another significant technological advancement towards the goal of individualizing scanning techniques [66].

With the increased number of detector rows, each x-ray beam projection covers a larger area. Within each projection, the exposure level is controlled by the beam-shaping filter. Although most CT vendors provide multiple filter shapes adapted to different body types and regions, such as head, body, cardiac and pediatric, the efficacy of this control is inherently limited because the filter cannot adjust dynamically to the attenuation within the beam coverage, which is usually highly nonuniform, especially when the detector coverage is large. With current scanner designs, a dynamically adjustable beam filter appears very difficult to implement. Distributed multisource technology, which is currently under investigation [172–174], offers more potential to modulate the x-ray beam dynamically.

Another important observation towards the goal of individualizing scanning techniques is that the image quality in the scanning field of view is not necessarily uniform, and the radiation dose distribution can be modulated so that the organ of interest is targeted and the doses to critical organs that are not of diagnostic interest are minimized. The concept of targeted region of interest imaging has already been proposed and experimentally demonstrated [175–177] based on new reconstruction algorithms that allow minimum data acquisition [178,179]. However, clinical implementations are not currently available.

Iterative reconstruction

Iterative reconstruction has long been widely used in PET and single photon emission computed tomography [180–186], and it has recently received much attention in x-ray CT [187–193]. Compared with conventional filtered backprojection (FBP) techniques, iterative reconstruction algorithms have the advantage of accurately modeling the system geometry and incorporating physical effects such as the beam spectrum, noise, beam hardening, scatter and incomplete data sampling. Unlike FBP algorithms, in which all projection data are treated equally, iterative algorithms allow different degrees of credibility to be assigned to different projection data [191]. More accurate noise models based on photon statistics and other physical properties of the data acquisition are used in iterative reconstruction [81,92,191], yielding lower image noise than FBP. Therefore, iterative reconstruction presents potential opportunities to reduce radiation dose in x-ray CT. In addition, iterative reconstruction can improve spatial resolution [190,191] and reduce image artifacts such as beam hardening, windmill and metal artifacts [188,191,194]. Owing to the intrinsic differences in data handling between FBP and iterative reconstruction algorithms, images from iterative reconstruction may have a different appearance from FBP images [191,195]. This aspect requires careful investigation before iterative reconstruction is widely used in clinical diagnosis.
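The "different degrees of credibility" idea can be illustrated with a generic penalized weighted least-squares (PWLS) objective, (y − Ax)ᵀW(y − Ax) + β‖Dx‖², where W weights each ray by its statistical reliability (proportional to detected counts for Poisson data) and D is a smoothness penalty. This toy sketch uses a small random matrix in place of a real CT system matrix, hypothetical photon counts and penalty strength, and plain gradient descent; practical reconstructions never form the Hessian explicitly and apply A and Aᵀ as projection operators instead. It is not the algorithm of any cited work or vendor.

```python
import numpy as np


def pwls_reconstruct(A, y, weights, beta, n_iter=5000):
    """Penalized weighted least squares by plain gradient descent.

    Minimizes (y - Ax)^T W (y - Ax) + beta * ||Dx||^2 with W = diag(weights)
    and D a first-difference (smoothness) operator. Larger weights give a ray
    more credibility; with Poisson data they are proportional to its counts.
    """
    n = A.shape[1]
    D = np.diff(np.eye(n), axis=0)              # (n-1, n) finite differences
    H = A.T @ (weights[:, None] * A) + beta * D.T @ D
    step = 1.0 / np.linalg.eigvalsh(H).max()    # safe step for this quadratic
    rhs = A.T @ (weights * y)
    x = np.zeros(n)
    for _ in range(n_iter):
        x -= step * (H @ x - rhs)               # half the objective's gradient
    return x, H


# Toy problem: a random matrix stands in for the CT system matrix.
rng = np.random.default_rng(1)
A = rng.normal(size=(40, 20))
x_true = np.cumsum(rng.normal(size=20))          # smooth-ish "image" profile
counts = rng.uniform(200.0, 2000.0, size=40)     # hypothetical photons per ray
y = A @ x_true + rng.normal(size=40) / np.sqrt(counts)  # variance ~ 1/counts
w = counts / counts.max()
x_hat, H = pwls_reconstruct(A, y, w, beta=1.0)
print(np.round(x_hat[:5], 3), np.round(x_true[:5], 3))
```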

Iterative reconstruction can also handle incomplete data sets better than FBP algorithms. A new image reconstruction theory, compressed sensing, was recently introduced to allow accurate image reconstruction from incomplete data sets [196,197]. This theory and its extensions have been applied in CT imaging [198–202], demonstrating that the number of projection views can be substantially reduced without sacrificing image quality. These algorithms therefore have significant potential for radiation dose reduction in CT.
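The core compressed sensing claim, exact recovery from fewer measurements than unknowns when the signal is sparse, can be demonstrated with ℓ1-regularized least squares solved by FISTA (an accelerated iterative shrinkage-thresholding method). This is a generic toy, assuming a random Gaussian matrix in place of CT projections and a directly sparse signal; CT applications typically exploit sparsity of the image gradient (total variation) instead. The problem sizes and λ are hypothetical choices.

```python
import numpy as np


def soft_threshold(v, t):
    """Shrink each entry toward zero by t (proximal operator of t*||.||_1)."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)


def fista(A, y, lam, n_iter=2000):
    """Minimize 0.5*||Ax - y||^2 + lam*||x||_1 with FISTA."""
    lipschitz = np.linalg.norm(A, 2) ** 2
    x = np.zeros(A.shape[1])
    z = x.copy()
    t = 1.0
    for _ in range(n_iter):
        x_new = soft_threshold(z - A.T @ (A @ z - y) / lipschitz, lam / lipschitz)
        t_new = (1.0 + np.sqrt(1.0 + 4.0 * t * t)) / 2.0
        z = x_new + ((t - 1.0) / t_new) * (x_new - x)
        x, t = x_new, t_new
    return x


def rel_error(x, ref):
    return np.linalg.norm(x - ref) / np.linalg.norm(ref)


rng = np.random.default_rng(0)
n, m, k = 100, 60, 5                 # unknowns, measurements (m < n), nonzeros
A = rng.normal(size=(m, n)) / np.sqrt(m)
x_true = np.zeros(n)
support = rng.choice(n, size=k, replace=False)
x_true[support] = rng.choice([-1.0, 1.0], size=k) * rng.uniform(1.0, 2.0, size=k)
y = A @ x_true                       # fewer measurements than unknowns

x_sparse = fista(A, y, lam=0.02)
x_min_norm = np.linalg.pinv(A) @ y   # least-squares answer without a sparsity prior
print(f"sparse recovery error: {rel_error(x_sparse, x_true):.3f}")
print(f"minimum-norm error:    {rel_error(x_min_norm, x_true):.3f}")
```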

High computational load has always been the biggest challenge for iterative reconstruction and has kept it from being clinically practical in CT imaging. However, software [186,203,204] and hardware [205–209] methods have been investigated to accelerate the iterative procedure. Using graphics processing units [205–208] and the Cell Broadband Engine [209], reconstruction times have been significantly shortened. With further advances in computation technology, manufacturers are expected to introduce iterative reconstruction into clinical practice in the near future.

Photon-counting detector

Detectors can be classified by operation mode: pulse mode and current mode [210]. In pulse mode, the signal from each interaction is processed individually. In current mode, the electrical signals from individual interactions are integrated and averaged, forming a net current signal. When a detector is operated in current mode, all information regarding individual interactions is lost; neither the interaction rate nor the energies deposited by individual interactions can be determined. In pulse mode, however, it is possible to count each photon and record its energy individually, provided the electronics are fast enough to detect, discriminate and record information on a photon-by-photon basis. Such detectors are referred to as photon-counting detectors.

Photon-counting data acquisition has a number of advantages over charge-integrating acquisition. The photon-counting detector can improve the SNR by eliminating electronic noise and Swank noise [211]. An energy-resolving photon-counting detector can further improve SNR by assigning a more optimal, energy-dependent weighting factor to each detected photon [212–215]. An additional SNR improvement is possible for energy-resolving photon-counting detectors through the rejection (complete or partial) of scattered radiation [216]. A Monte Carlo simulation study showed that the SNR improvement in breast CT imaging is 10–30% using plain photon counting (i.e., weighting factor = 1), and 20–90% using optimal weighting factors. The optimal weighting factor depends on photon energy, the material of interest and its thickness. For low diagnostic x-ray energies (up to 40 keV), the optimal weighting factor is approximately 1/E³, independent of material and thickness, owing to the dominance of the photoelectric effect in this energy range [214,215]. The benefit of this SNR improvement can be used directly to reduce patient dose. Computer simulations showed that the dose can be reduced by a factor of 1.5–2.5 for breast CT imaging [215,217].
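The weighting argument can be made concrete for a low-contrast detection task. The sketch computes SNR = Σw·ΔN / √(Σw²·N) for an idealized energy-integrating detector (w = E, ignoring electronic and Swank noise), plain photon counting (w = 1) and optimal linear weights w ∝ ΔN/N. For a thin object ΔN/N is proportional to the attenuation difference, which is why it approaches 1/E³ where the photoelectric effect dominates. The four-bin spectrum and contrast fractions are hypothetical, and since SNR² scales with dose, the last line converts the SNR gain into an equivalent dose factor for this toy case only.

```python
import numpy as np


def detection_snr(weights, n_background, delta_n):
    """SNR of a weighted sum of energy-bin counts for a low-contrast task.

    Signal = sum(w * delta_n); noise uses the Poisson variance of the
    background counts, sqrt(sum(w**2 * n_background)) (small-contrast limit).
    """
    w = np.asarray(weights, dtype=float)
    return abs(np.sum(w * delta_n)) / np.sqrt(np.sum(w**2 * n_background))


# Hypothetical 4-bin spectrum behind the background, and the contrast deficit.
energies = np.array([30.0, 50.0, 70.0, 90.0])        # keV (bin centers)
n_bg = np.array([1000.0, 3000.0, 2500.0, 1000.0])    # counts per bin
delta = n_bg * np.array([0.10, 0.05, 0.03, 0.02])    # contrast larger at low E

snr_energy = detection_snr(energies, n_bg, delta)    # energy integrating (w = E)
snr_count = detection_snr(np.ones(4), n_bg, delta)   # photon counting (w = 1)
snr_opt = detection_snr(delta / n_bg, n_bg, delta)   # optimal weights (dN / N)
print(f"energy-integrating {snr_energy:.2f}, counting {snr_count:.2f}, "
      f"optimal {snr_opt:.2f}")
print(f"equivalent dose factor vs integrating: {(snr_opt / snr_energy) ** 2:.2f}")
```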

Although photon-counting detectors with energy discrimination capabilities are widely used in radiation field surveys, health physics and nuclear medicine, they were not considered for x-ray transmission imaging systems until recently, simply because the photon fluxes are too high to allow the detector to count each photon individually. Detectors used in current clinical CT scanners are exclusively scintillator detectors operated in current mode. With the introduction of faster detector electronics and more robust semiconductor detector materials, it will soon be possible to use photon-counting detectors in transmission imaging systems such as mammography and CT scanners [218–221]; much progress has already been made in developing photon-counting detectors for mammography and CT systems [216,217,222–226]. Owing to the relatively low x-ray energy and photon flux, it is easier to develop a photon-counting mammography system, and a dedicated photon-counting digital mammography system is already commercially available [217,223]. Approximately 35–45% of the dose can be saved compared with a system employing a charge-integrating detector at the same image quality [223]. Although a clinical photon-counting CT system is not yet available, micro-CT systems with photon-counting capability have been reported by several groups [219–221,224,226].
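Why high photon flux is the obstacle can be quantified with the two standard dead-time models from counting statistics: a nonparalyzable detector records n/(1 + nτ), and a paralyzable one records n·exp(−nτ), where n is the true rate and τ the dead time. The 100 ns dead time and the incident rates below are hypothetical illustrations, not specifications of any detector cited here.

```python
import math


def nonparalyzable_rate(true_rate: float, dead_time_s: float) -> float:
    """Recorded count rate when events arriving during dead time are lost."""
    return true_rate / (1.0 + true_rate * dead_time_s)


def paralyzable_rate(true_rate: float, dead_time_s: float) -> float:
    """Recorded rate when every event, even an uncounted one, extends dead time."""
    return true_rate * math.exp(-true_rate * dead_time_s)


tau = 100e-9  # hypothetical 100 ns dead time per detector pixel
for rate in (1e5, 1e6, 1e7, 1e8):  # incident photons per second per pixel
    print(f"{rate:.0e}/s: nonparalyzable {nonparalyzable_rate(rate, tau) / rate:.1%}, "
          f"paralyzable {paralyzable_rate(rate, tau) / rate:.1%}")
```

Once the rate approaches 1/τ, a large fraction of photons is lost, and a paralyzable detector's recorded rate actually falls as flux increases, which is why faster electronics are a prerequisite for clinical CT fluxes.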

Conclusion

Keeping radiation dose as low as reasonably achievable (ALARA) is the guiding principle for a medically indicated CT examination. Many techniques and strategies are available for radiation dose reduction, as described in this article. The appropriate use of these strategies is critical to accomplish the goal of ALARA.

In addition to efforts to reduce the radiation dose from each CT scan, justification of the CT examination represents the other critical aspect of dose reduction, requiring subspecialty guidelines for referring physicians and radiologists. Two extremes should be avoided: performing unnecessary CT examinations (e.g., whole-body screening) while neglecting the potential risk, and placing too much emphasis on risk without considering the tremendous benefit of CT, which often far outweighs the potential risk. When the radiation dose per examination is reduced, for example through the introduction of novel technologies, the associated risk is also reduced, further improving the benefit/risk ratio.

Acknowledgements

The authors would like to thank K Nunez for assistance with the manuscript preparation.

Financial & competing interests disclosure

L Yu and CH McCollough were partly supported by Grant EB007986 from the National Institute of Biomedical Imaging and Bioengineering. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institute of Biomedical Imaging and Bioengineering or the NIH. CH McCollough and JG Fletcher receive a research grant from Siemens Healthcare. The authors have no other relevant affiliations or financial involvement with any organization or entity with a financial interest in or financial conflict with the subject matter or materials discussed in the manuscript apart from those disclosed.

No writing assistance was utilized in the production of this manuscript.

References

Papers of special note have been highlighted as:

• • of considerable interest

- Hounsfield GN: Computerized transverse axial scanning (tomography): part 1. Description of system. Br. J. Radiol. 46, 1016–1022 (1973).

- Kalender WA, Seissler W, Klotz E, Vock P: Spiral volumetric CT with single-breath-hold technique, continuous transport, and continuous scanner rotation. Radiology 176, 181–183 (1990).

- Crawford CR, King KF: Computed tomography scanning with simultaneous patient translation. Med. Phys. 17, 967–982 (1990).

- McCollough CH, Zink FE: Performance evaluation of a multi-slice CT system. Med. Phys. 26, 2223–2230 (1999).

- Brenner DJ, Hall EJ: Computed tomography – an increasing source of radiation exposure. N. Engl. J. Med. 357, 2277–2284 (2007).

- Biologic Effects of Ionizing Radiation (BEIR) Report VII: Health risks from exposure to low levels of ionizing radiation. National Academies (2006).

- Einstein AJ, Henzlova MJ, Rajagopalan S: Estimating risk of cancer associated with radiation exposure from 64-slice computed tomography coronary angiography. JAMA 298, 317–323 (2007).

- Einstein AJ, Moser KW, Thompson RC, Cerqueira MD, Henzlova MJ: Radiation dose to patients from cardiac diagnostic imaging. Circulation 116, 1290–1305 (2007).

- McCollough CH, Guimarães L, Fletcher JG: In defense of body CT. AJR Am. J. Roentgenol. 193, 28–39 (2009).

- McCollough CH, Primak AN, Braun N, Kofler J, Yu L, Christner J: Strategies for reducing radiation dose in CT. Radiol. Clin. North Am. 47, 27–40 (2009).

- The Royal College of Radiologists: Making the Best Use of Clinical Radiology Services: Referral Guidelines (6th Edition). London (2007).

- International Electrotechnical Commission: Medical electrical equipment. Part 2–44: Particular requirements for the safety of x-ray equipment for computed tomography. IEC publication No. 60601-2-44. Ed. 2.1. International Electrotechnical Commission (IEC), Geneva, Switzerland (2002).

- Dixon RL: A new look at CT dose measurement: beyond CTDI. Med. Phys. 30, 1272–1280 (2003).

- Brenner DJ: It is time to retire the computed tomography dose index (CTDI) for CT quality assurance and dose optimization. For the proposition. Med. Phys. 33, 1189–1190 (2006).

- Perisinakis K, Damilakis J, Tzedakis A, Papadakis A, Theocharopoulos N, Gourtsoyiannis N: Determination of the weighted CT dose index in modern multi-detector CT scanners. Phys. Med. Biol. 52, 6485–6495 (2007).

- Boone JM: The trouble with CTDI 100. Med. Phys. 34, 1364–1371 (2007).

- International Commission on Radiological Protection: Managing patient dose from multi detector computed tomography (MDCT), (ICRP Publication 102). Ann. ICRP 37(1), 1–79 (2007).

- Jacobi W: The concept of the effective dose – a proposal for the combination of organ doses. Radiat. Environ. Biophys. 12, 101–109 (1975).

- International Commission on Radiological Protection: Recommendations of the International Commission on Radiological Protection (ICRP Publication 26). The International Commission on Radiological Protection, Oxford, UK (1977).

- McCollough CH, Schueler BA: Calculation of effective dose. Med. Phys. 27, 828–837 (2000).

- International Commission on Radiological Protection: 1990 Recommendations of the International Commission on Radiological Protection (Report 60). Ann. ICRP 21(1–3) (1991).

- International Commission on Radiological Protection: 2007 recommendations of the International Commission on Radiological Protection (ICRP Publication 103). Ann. ICRP 37, 1–332 (2007).

- Cristy M: Mathematical Phantoms Representing Children of Various Ages for Use in Estimates of Internal Dose. Oak Ridge National Laboratory, TN, USA (1980).

- DeMarco JJ, Cagnon CH, Cody DD et al.: Estimating radiation doses from multidetector CT using Monte Carlo simulations: effects of different size voxelized patient models on magnitudes of organ and effective dose. Phys. Med. Biol. 52, 2583–2597 (2007).

- Perisinakis K, Tzedakis A, Damilakis J: On the use of Monte Carlo-derived dosimetric data in the estimation of patient dose from CT examinations. Med. Phys. 35, 2018–2028 (2008).

- Deak P, van Straten M, Shrimpton PC, Zankl M, Kalender WA: Validation of a Monte Carlo tool for patient-specific dose simulations in multi-slice computed tomography. Eur. Radiol. 18, 759–772 (2008).

- Myronakis M, Perisinakis K, Tzedakis A, Gourtsoyianni S, Damilakis J: Evaluation of a patient-specific Monte Carlo software for CT dosimetry. Radiat. Prot. Dosimetry 133, 248–255 (2009).

- Fletcher JG, Johnson CD, Welch TJ et al.: Optimization of CT colonography technique: prospective trial in 180 patients. Radiology 216, 704–711 (2000).

- Callstrom MR, Johnson CD, Fletcher JG et al.: CT colonography without cathartic preparation: feasibility study. Radiology 219, 693–698 (2001).

- Kalender WA, Buchenau S, Deak P et al.: Technical approaches to the optimisation of CT. Phys. Med. 24, 71–79 (2008).

- McCollough CH, Ulzheimer S, Halliburton SS, Shanneik K, White RD, Kalender WA: Coronary artery calcium: a multi-institutional, multimanufacturer international standard for quantification at cardiac CT. Radiology 243, 527–538 (2007).

- European Commission: European guidelines on quality criteria for computed tomography (EUR 16262 EN). European Commission, Luxembourg (2000).

- American College of Radiology: ACR Practice Guideline for Diagnostic Reference Levels in Medical X-ray Imaging (2002).

- American College of Radiology: ACR practice guideline for diagnostic reference levels in medical x-ray imaging. In: Practice Guidelines and Technical Standards. American College of Radiology, Reston, VA, USA 799–804 (2008).

- Hsieh J: Computed Tomography: Principles, Design, Artifacts, and Recent Advances. SPIE Press. Bellingham, WA, USA (2006).

- Perisinakis K, Papadakis AE, Damilakis J: The effect of x-ray beam quality and geometry on radiation utilization efficiency in multidetector CT imaging. Med. Phys. 36, 1258–1266 (2009).

- Siewerdsen JH, Jaffray DA: Cone-beam computed tomography with a flat-panel imager: magnitude and effects of x-ray scatter. Med. Phys. 28, 220–231 (2001).

- Boone JM, Cooper VN 3rd, Nemzek WR, McGahan JP, Seibert JA: Monte Carlo assessment of computed tomography dose to tissue adjacent to the scanned volume. Med. Phys. 27, 2393–2407 (2000).

- Tzedakis A, Damilakis J, Perisinakis K, Stratakis J, Gourtsoyiannis N: The effect of z overscanning on patient effective dose from multidetector helical computed tomography examinations. Med. Phys. 32, 1621–1629 (2005).

- van der Molen AJ, Geleijns J: Overranging in multisection CT: quantification and relative contribution to dose – comparison of four 16-section CT scanners. Radiology 242, 208–216 (2007).

- Tang X, Hsieh J, Dong F, Fan J, Toth TL: Minimization of over-ranging in helical volumetric CT via hybrid cone beam image reconstruction – benefits in dose efficiency. Med. Phys. 35, 3232–3238 (2008).

- Stierstorfer K, Kuhn U, Wolf H, Petersilka M, Suess C, Flohr T: Principle and performance of a dynamic collimation technique for spiral CT. Presented at: 93rd Radiological Society of North America Annual Meeting. Chicago, IL, USA, 25–30 November 2007.

- Christner J, Zavaletta V, Eusemann C, Walz-Flannigan AI, McCollough CH: Dose reduction in spiral CT using dynamically adjustable z-axis x-ray beam collimation. AJR Am. J. Roentgenol. (2009) (In Press).

- Toth TL, Cesmeli E, Ikhlef A, Horiuchi T: Image quality and dose optimization using novel x-ray source filters tailored to patient size. Proc. Soc. Photo Opt. Instrum. Eng. 5745–5734 (2005).

- McCollough CH, Primak AN, Saba O et al.: Dose performance of a 64-channel dual-source CT scanner. Radiology 243, 775–784 (2007).

- Huda W, Bushong SC: In x-ray computed tomography, technique factors should be selected appropriate to patient size. Med. Phys. 28, 1543–1545 (2001).

- Frush DP: Strategies of dose reduction. Pediatr. Radiol. 32, 293–297 (2002).

- Huda W, Lieberman KA, Chang J, Roskopf ML: Patient size and x-ray technique factors in head computed tomography examinations. I. Radiation doses. Med. Phys. 31, 588–594 (2004).

- Huda W, Lieberman KA, Chang J, Roskopf ML: Patient size and x-ray technique factors in head computed tomography examinations. II. Image quality. Med. Phys. 31, 595–601 (2004).

- Nyman U, Ahl TL, Kristiansson M, Nilsson L, Wettemark S: Patient-circumference-adapted dose regulation in body computed tomography. A practical and flexible formula. Acta Radiol. 46, 396–406 (2005).

- McCollough CH: CT dose: how to measure, how to reduce. Health Phys. 95, 508–517 (2008).

- Gies M, Kalender WA, Wolf H, Suess C: Dose reduction in CT by anatomically adapted tube current modulation. I. Simulation studies. Med. Phys. 26, 2235–2247 (1999).

- Kalender WA, Wolf H, Suess C: Dose reduction in CT by anatomically adapted tube current modulation. II. Phantom measurements. Med. Phys. 26, 2248–2253 (1999).

- Haaga JR, Miraldi F, MacIntyre W, LiPuma JP, Bryan PJ, Wiesen E: The effect of mAs variation upon computed tomography image quality as evaluated by in vivo and in vitro studies. Radiology 138, 449–454 (1981).

- Kopka L, Funke M, Breiter N, Grabbe E: Anatomically adapted CT tube current: dose reduction and image quality in phantom and patient studies. Radiology 197(P), 292 (1995).

- McCollough CH, Bruesewitz MR, Kofler JM Jr: CT dose reduction and dose management tools: overview of available options. Radiographics 26, 503–512 (2006).

- Wilting JE, Zwartkruis A, van Leeuwen MS, Timmer J, Kamphuis AG, Feldberg M: A rational approach to dose reduction in CT: individualized scan protocols. Eur. Radiol. 11, 2627–2632 (2001).

- McCollough C, Yu L: Unintentional errors in CT imaging with use of a constant noise automatic exposure control (AEC) paradigm. Presented at: 94th Scientific Assembly and Annual Meeting, Radiological Society of North America. Chicago, IL, USA, 30 November–6 December 2008.

- Huda W, Scalzetti EM, Levin G: Technique factors and image quality as functions of patient weight at abdominal CT. Radiology 217, 430–435 (2000).

- Boone JM, Geraghty EM, Seibert JA, Wootton-Gorges SL: Dose reduction in pediatric CT: a rational approach. Radiology 228, 352–360 (2003).

- Siegel MJ, Schmidt B, Bradley D, Suess C, Hildebolt C: Radiation dose and image quality in pediatric CT: effect of technical factors and phantom size and shape. Radiology 233, 515–522 (2004).

- Cody DD, Moxley DM, Krugh KT, O’Daniel JC, Wagner LK, Eftekhari F: Strategies for formulating appropriate MDCT techniques when imaging the chest, abdomen, and pelvis in pediatric patients. AJR Am. J. Roentgenol. 182, 849–859 (2004).

- Ertl-Wagner BB, Hoffmann RT, Bruning R et al.: Multi-detector row CT angiography of the brain at various kilovoltage settings. Radiology 231, 528–535 (2004).

- Sigal-Cinqualbre AB, Hennequin R, Abada HT, Chen X, Paul JF: Low-kilovoltage multi-detector row chest CT in adults: feasibility and effect on image quality and iodine dose. Radiology 231, 169–174 (2004).

- Funama Y, Awai K, Nakayama Y et al.: Radiation dose reduction without degradation of low-contrast detectability at abdominal multisection CT with a low-tube voltage technique: phantom study. Radiology 237, 905–910 (2005).

- McCollough CH: Automatic exposure control in CT: are we done yet? Radiology 237, 755–756 (2005).

- Wintersperger B, Jakobs T, Herzog P et al.: Aorto–iliac multidetector-row CT angiography with low kV settings: improved vessel enhancement and simultaneous reduction of radiation dose. Eur. Radiol. 15, 334–341 (2005).

- Holmquist F, Nyman U: Eighty-peak kilovoltage 16-channel multidetector computed tomography and reduced contrast-medium doses tailored to body weight to diagnose pulmonary embolism in azotaemic patients. Eur. Radiol. 16, 1165–1176 (2006).

- Schueller-Weidekamm C, Schaefer-Prokop CM, Weber M, Herold CJ, Prokop M: CT angiography of pulmonary arteries to detect pulmonary embolism: improvement of vascular enhancement with low kilovoltage settings. Radiology 241, 899–907 (2006).

- Waaijer A, Prokop M, Velthuis BK, Bakker CJ, de Kort GA, van Leeuwen MS: Circle of Willis at CT angiography: dose reduction and image quality – reducing tube voltage and increasing tube current settings. Radiology 242, 832–839 (2007).

- Kalva SP, Sahani DV, Hahn PF, Saini S: Using the K-edge to improve contrast conspicuity and to lower radiation dose with a 16-MDCT: a phantom and human study. J. Comput. Assist. Tomogr. 30, 391–397 (2006).

- Nakayama Y, Awai K, Funama Y et al.: Abdominal CT with low tube voltage: preliminary observations about radiation dose, contrast enhancement, image quality, and noise. Radiology 237, 945–951 (2005).

- Schindera ST, Nelson RC, Mukundan S Jr et al.: Hypervascular liver tumors: low tube voltage, high tube current multi-detector row CT for enhanced detection – phantom study. Radiology 246, 125–132 (2008).

- Fletcher JG, Takahashi N, Hartman R et al.: Dual-energy and dual-source CT: is there a role in the abdomen and pelvis? Radiol. Clin. North Am. 47, 41–57 (2009).

- Kak AC, Slaney M: Principles of Computed Tomographic Imaging. SIAM, Philadelphia, PA, USA (1987).

- Hsieh J: Adaptive streak artifact reduction in computed tomography resulting from excessive x-ray photon noise. Med. Phys. 25, 2139–2147 (1998).

- Kachelriess M, Watzke O, Kalender WA: Generalized multi-dimensional adaptive filtering for conventional and spiral single-slice, multi-slice, and cone-beam CT. Med. Phys. 28, 475–490 (2001).

- Demirkaya O: Reduction of noise and image artifacts in computed tomography by nonlinear filtration of projection images. Proc. Soc. Photo Opt. Instrum. Eng. 4322, 917–923 (2001).

- Li T, Li X, Wang J et al.: Nonlinear sinogram smoothing for low-dose x-ray CT. IEEE Trans. Nucl. Sci. 51, 2505–2513 (2004).

- La Rivière PJ: Penalized-likelihood sinogram smoothing for low-dose CT. Med. Phys. 32, 1676–1683 (2005).

- Wang J, Li T, Lu H, Liang Z: Penalized weighted least-squares approach to sinogram noise reduction and image reconstruction for low-dose x-ray computed tomography. IEEE Trans. Med. Imaging 25, 1272–1283 (2006).

- La Rivière PJ, Bian J, Vargas PA: Penalized-likelihood sinogram restoration for computed tomography. IEEE Trans. Med. Imaging 25, 1022–1036 (2006).

- Bai M, Chen J, Raupach R, Suess C, Tao Y, Peng M: Effect of nonlinear three-dimensional optimized reconstruction algorithm filter on image quality and radiation dose: validation on phantoms. Med. Phys. 36, 95–97 (2009).

- Yu L, Manduca A, Trzasko JD et al.: Sinogram smoothing with bilateral filtering for low-dose CT. Proc. Soc. Photo Opt. Instrum. Eng. 6913, 29 (2008).

- Stierstorfer K, Flohr T, Bruder H: Segmented multiple plane reconstruction: a novel approximate reconstruction scheme for multi-slice spiral CT. Phys. Med. Biol. 47, 2571–2581 (2002).

- Bontus C, Kohler T, Proksa R: EnPIT: Filtered back-projection algorithm for helical CT using an n-Pi acquisition. IEEE Trans. Med. Imaging 24, 977–986 (2005).

- Schondube H, Stierstorfer K, Noo F: Accurate helical cone-beam CT reconstruction with redundant data. Phys. Med. Biol. 54, 4625–4644 (2009).

- Mayo JR, Whittall KP, Leung AN et al.: Simulated dose reduction in conventional chest CT: validation study. Radiology 202, 453–457 (1997).

- van Gelder RE, Venema HW, Serlie IW et al.: CT colonography at different radiation dose levels: feasibility of dose reduction. Radiology 224, 25–33 (2002).

- Frush DP, Slack CC, Hollingsworth CL et al.: Computer-simulated radiation dose reduction for abdominal multidetector CT of pediatric patients. AJR Am. J. Roentgenol. 179, 1107–1113 (2002).

- Thomas K, Rittenhouse R, Yu L et al.: Creation and implementation of reduced-kV pediatric body CT protocols. Presented at: 94th Scientific Assembly and Annual Meeting, Radiological Society of North America. Chicago, IL, USA, 30 November–5 December 2008.

- Whiting BR, Massoumzadeh P, Earl OA, O’Sullivan JA, Snyder DL, Williamson JF: Properties of preprocessed sinogram data in x-ray computed tomography. Med. Phys. 33, 3290–3303 (2006).

- Kalender WA: X-ray computed tomography. Phys. Med. Biol. 51, R29–R43 (2006).

- Paterson A, Frush DP: Dose reduction in paediatric MDCT: general principles. Clin. Radiol. 62, 507–517 (2007).

- Brenner D, Elliston C, Hall E, Berdon W: Estimated risks of radiation-induced fatal cancer from pediatric CT. AJR Am. J. Roentgenol. 176, 289–296 (2001).

- Arch ME, Frush DP: Pediatric body MDCT: a 5-year follow-up survey of scanning parameters used by pediatric radiologists. AJR Am. J. Roentgenol. 191, 611–617 (2008).

- McCollough CH: Optimization of multidetector array CT acquisition parameters for CT colonography. Abdom. Imaging 27, 253–259 (2002).

- Flohr TG, Schoepf UJ, Ohnesorge BM: Chasing the heart: new developments for cardiac CT. J. Thorac. Imaging 22, 4–16 (2007).

- Hendel RC, Patel MR, Kramer CM et al.: ACCF/ACR/SCCT/SCMR/ASNC/NASCI/ SCAI/SIR 2006 appropriateness criteria for cardiac computed tomography and cardiac magnetic resonance imaging: a report of the American College of Cardiology Foundation Quality Strategic Directions Committee Appropriateness Criteria Working Group, American College of Radiology, Society of Cardiovascular Computed Tomography, Society for Cardiovascular Magnetic Resonance, American Society of Nuclear Cardiology, North American Society for Cardiac Imaging, Society for Cardiovascular Angiography and Interventions, and Society of Interventional Radiology. J. Am. Coll. Cardiol. 48, 1475–1497 (2006).

- Gibbons RJ, Araoz PA, Williamson EE: The year in cardiac imaging. J. Am. Coll. Cardiol. 53, 54–70 (2009).

- Achenbach S: Cardiac CT: state of the art for the detection of coronary arterial stenosis. J. Cardiovasc. Comput. Tomogr. 1, 3–20 (2007).

- Marano R, Liguori C, Rinaldi P et al.: Coronary artery bypass grafts and MDCT imaging: what to know and what to look for. Eur. Radiol. 17, 3166–3178 (2007).

- Brodoefel H, Kramer U, Reimann A et al.: Dual-source CT with improved temporal resolution in assessment of left ventricular function: a pilot study. AJR Am. J. Roentgenol. 189, 1064–1070 (2007).

- Alkadhi H, Desbiolles L, Husmann L et al.: Aortic regurgitation: assessment with 64-section CT. Radiology 245, 111–121 (2007).

- Nieman K, Shapiro MD, Ferencik M et al.: Reperfused myocardial infarction: contrast-enhanced 64-Section CT in comparison to MR imaging. Radiology 247, 49–56 (2008).

- Ruzsics B, Lee H, Zwerner PL, Gebregziabher M, Costello P, Schoepf UJ: Dual-energy CT of the heart for diagnosing coronary artery stenosis and myocardial ischemia – initial experience. Eur. Radiol. 18, 2414–2424 (2008).

- Kachelriess M, Kalender WA: Electrocardiogram-correlated image reconstruction from subsecond spiral computed tomography scans of the heart. Med. Phys. 25, 2417–2431 (1998).

- Taguchi K, Anno H: High temporal resolution for multislice helical computed tomography. Med. Phys. 27, 861–872 (2000).

- Ohnesorge B, Flohr T, Becker C et al.: Cardiac imaging by means of electrocardiographically gated multisection spiral CT: initial experience. Radiology 217, 564–571 (2000).

- Alkadhi H: Radiation dose of cardiac CT – what is the evidence? Eur. Radiol. 19, 1311–1315 (2009).

- Hausleiter J, Meyer T, Hermann F et al.: Estimated radiation dose associated with cardiac CT angiography. JAMA 301, 500–507 (2009).

- Jakobs TF, Becker CR, Ohnesorge B et al.: Multislice helical CT of the heart with retrospective ECG gating: reduction of radiation exposure by ECG-controlled tube current modulation. Eur. Radiol. 12, 1081–1086 (2002).

- Weustink AC, Mollet NR, Pugliese F et al.: Optimal electrocardiographic pulsing windows and heart rate: effect on image quality and radiation exposure at dual-source coronary CT angiography. Radiology 248, 792–798 (2008).

- Flohr TG, McCollough CH, Bruder H et al.: First performance evaluation of a dual-source CT (DSCT) system. Eur. Radiol. 16, 256–268 (2006).

- Hsieh J, Londt J, Vass M, Li J, Tang X, Okerlund D: Step-and-shoot data acquisition and reconstruction for cardiac x-ray computed tomography. Med. Phys. 33, 4236–4248 (2006).

- Stolzmann P, Leschka S, Scheffel H et al.: Dual-source CT in step-and-shoot mode: noninvasive coronary angiography with low radiation dose. Radiology 249, 71–80 (2008).

- Scheffel H, Alkadhi H, Leschka S et al.: Low-dose CT coronary angiography in the step-and-shoot mode: diagnostic performance. Heart 94, 1132–1137 (2008).

- Hein PA, Romano VC, Lembcke A, May J, Rogalla P: Initial experience with a chest pain protocol using 320-slice volume MDCT. Eur. Radiol. 19, 1148–1155 (2009).

- Dewey M, Zimmermann E, Laule M, Rutsch W, Hamm B: Three-vessel coronary artery disease examined with 320-slice computed tomography coronary angiography. Eur. Heart J. 29, 1669 (2008).

- Achenbach S, Marwan M, Schepis T et al.: High-pitch spiral acquisition: a new scan mode for coronary CT angiography. J. Cardiovasc. Comput. Tomogr. 3, 117–121 (2009).

- Leschka S, Stolzmann P, Schmid FT et al.: Low kilovoltage cardiac dual-source CT: attenuation, noise, and radiation dose. Eur. Radiol. 18, 1809–1817 (2008).

- Hausleiter J, Meyer T, Hadamitzky M et al.: Radiation dose estimates from cardiac multislice computed tomography in daily practice: impact of different scanning protocols on effective dose estimates. Circulation 113, 1305–1310 (2006).

- Alvarez RE, Macovski A: Energy-selective reconstructions in x-ray computed tomography. Phys. Med. Biol. 21, 733–744 (1976).